WARNING: Version 1.5 of Elasticsearch has passed its EOL date.

This documentation is no longer being maintained and may be removed. If you are running this version, we strongly advise you to upgrade. For the latest information, see the current release documentation.

Function Score Query

The function_score query allows you to modify the score of documents that are retrieved by a query. This can be useful if, for example, a score function is computationally expensive and it is sufficient to compute the score on a filtered set of documents.

function_score provides the same functionality that custom_boost_factor, custom_score and custom_filters_score provided, but with additional capabilities such as distance and recency scoring (see description below).

Using function score

To use function_score, the user has to define a query and one or more functions that compute a new score for each document returned by the query.

function_score can be used with only one function like this:

    "function_score": {
        "(query|filter)": {},
        "boost": "boost for the whole query",
        "FUNCTION": {},
        "boost_mode": "(multiply|replace|...)"
    }

Furthermore, several functions can be combined. In this case one can optionally choose to apply the function only if a document matches a given filter:

    "function_score": {
        "(query|filter)": {},
        "boost": "boost for the whole query",
        "functions": [
            {
                "filter": {},
                "FUNCTION": {},
                "weight": number
            },
            {
                "FUNCTION": {}
            },
            {
                "filter": {},
                "weight": number
            }
        ],
        "max_boost": number,
        "score_mode": "(multiply|max|...)",
        "boost_mode": "(multiply|replace|...)",
        "min_score": number
    }

If no filter is given with a function, this is equivalent to specifying "match_all": {}.

First, each document is scored by the defined functions. The parameter score_mode specifies how the computed scores are combined:

| score_mode | Description |
|---|---|
| multiply | scores are multiplied (default) |
| sum | scores are summed |
| avg | scores are averaged |
| first | the first function that has a matching filter is applied |
| max | maximum score is used |
| min | minimum score is used |

Because scores can be on different scales (for example, between 0 and 1 for decay functions but arbitrary for field_value_factor), and because a different impact of each function on the score is sometimes desirable, the score of each function can be adjusted with a user-defined weight. The weight can be defined per function in the functions array (see the example above) and is multiplied with the score computed by the respective function.

If weight is given without any other function declaration, weight acts as a function that simply returns the weight.
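
The way weighted function scores are combined under each score_mode can be sketched in Python. This is an illustration only, not the Elasticsearch implementation: the `combine` function and the `(matches, score, weight)` tuple layout are hypothetical, avg is assumed to be a plain mean of the weighted scores, and returning 1.0 when no function matches is also an assumption.

```python
from math import prod


def combine(functions, score_mode="multiply"):
    """Combine the scores of all functions whose filter matched one document.

    `functions` is a list of (matches, score, weight) tuples (hypothetical layout).
    """
    # Each function score is multiplied by its weight before combining.
    weighted = [score * weight for matches, score, weight in functions if matches]
    if not weighted:
        return 1.0  # assumption: a neutral value when nothing matches
    if score_mode == "multiply":
        return prod(weighted)
    if score_mode == "sum":
        return sum(weighted)
    if score_mode == "avg":
        return sum(weighted) / len(weighted)  # assumption: plain mean
    if score_mode == "first":
        return weighted[0]
    if score_mode == "max":
        return max(weighted)
    if score_mode == "min":
        return min(weighted)
    raise ValueError("unknown score_mode: %s" % score_mode)


# Three functions; the second one has a filter that does not match.
example = [(True, 0.5, 2.0), (False, 10.0, 1.0), (True, 3.0, 1.0)]
for mode in ("multiply", "sum", "avg", "first", "max", "min"):
    print(mode, combine(example, mode))
```

A function that consists of a weight only can be modelled in this sketch as a tuple with a score of 1.0.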

The new score can be restricted to not exceed a certain limit by setting the max_boost parameter. The default for max_boost is FLT_MAX.

The newly computed score is combined with the score of the query. The parameter boost_mode defines how:

| boost_mode | Description |
|---|---|
| multiply | query score and function score are multiplied (default) |
| replace | only function score is used, the query score is ignored |
| sum | query score and function score are added |
| avg | average |
| max | max of query score and function score |
| min | min of query score and function score |

By default, modifying the score does not change which documents match. To exclude documents that do not meet a certain score threshold, set the min_score parameter to the desired threshold (added in 1.5.0).
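
The last steps (capping with max_boost, combining with the query score according to boost_mode, and filtering with min_score) might be sketched as follows. The helper names `apply_boost_mode` and `keep_document` are hypothetical, and two details are assumptions rather than documented behaviour: that the cap is applied before the combination, and that a score exactly equal to min_score is kept.

```python
def apply_boost_mode(query_score, function_score, boost_mode="multiply",
                     max_boost=float("inf")):
    """Combine the query score with the (capped) function score."""
    # Assumption: max_boost caps the function score before it is combined.
    capped = min(function_score, max_boost)
    combiners = {
        "multiply": lambda q, f: q * f,
        "replace": lambda q, f: f,
        "sum": lambda q, f: q + f,
        "avg": lambda q, f: (q + f) / 2.0,
        "max": max,
        "min": min,
    }
    return combiners[boost_mode](query_score, capped)


def keep_document(score, min_score=None):
    """Return True if the document passes the optional min_score threshold."""
    # Assumption: a score equal to the threshold still matches.
    return min_score is None or score >= min_score


score = apply_boost_mode(2.0, 3.0, boost_mode="multiply", max_boost=2.5)
print(score, keep_document(score, min_score=4.0))
```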

Score functions

The function_score query provides several types of score functions.

Script score

The script_score function allows you to wrap another query and optionally customize its scoring with a computation derived from other numeric field values in the document, using a script expression. Here is a simple example:

"script_score" : {

"script" : "_score * doc['my_numeric_field'].value"

}

In addition to the field values and expressions available to scripts, the _score script parameter can be used to retrieve the score based on the wrapped query.

Scripts are cached for faster execution. If the script has parameters that it needs to take into account, it is preferable to reuse the same script, and provide parameters to it:

"script_score": {

"lang": "lang",

"params": {

"param1": value1,

"param2": value2

},

"script": "_score * doc['my_numeric_field'].value / pow(param1, param2)"

}
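
One way to follow this advice from client code is to keep the script text constant and vary only the params. The sketch below builds such request bodies as plain Python dictionaries; the helper name `script_score_body` and the match_all query are assumptions made for the example, and no client library is involved.

```python
import json

# The script text never changes, so the cached script can be reused.
SCRIPT = "_score * doc['my_numeric_field'].value / pow(param1, param2)"


def script_score_body(param1, param2):
    """Build a search body; only the params differ between calls."""
    return {
        "query": {
            "function_score": {
                "query": {"match_all": {}},  # assumption: any query works here
                "script_score": {
                    "script": SCRIPT,
                    "params": {"param1": param1, "param2": param2},
                },
            }
        }
    }


first = script_score_body(2, 3.1)
second = script_score_body(4, 1.5)
print(json.dumps(first, indent=2))
```

Embedding the values directly in the script string would instead produce a different script for every request.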

Note that unlike the custom_score query, the score of the query is multiplied with the result of the script scoring. If you wish to inhibit this, set "boost_mode": "replace".

Weight

The weight score allows you to multiply the score by the provided weight. This can sometimes be desired since a boost value set on specific queries gets normalized, while for this score function it does not.

"weight" : number

Random

The random_score generates scores using a hash of the _uid field, with a seed for variation. If seed is not specified, the current time is used.

Using this feature will load field data for _uid, which can be a memory-intensive operation since the values are unique.

"random_score": {

"seed" : number

}
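
The idea of hashing the _uid together with a seed can be illustrated with a small Python sketch. It does not reproduce the hash that Elasticsearch uses; SHA-256, the `random_score` helper and the example uid "hotel#1" are assumptions chosen only to show why a fixed seed gives every document a stable pseudo-random score.

```python
import hashlib


def random_score(uid, seed):
    """Map (seed, uid) to a reproducible pseudo-random score in [0, 1)."""
    # Assumption: SHA-256 stands in for the real hash function.
    digest = hashlib.sha256(("%d:%s" % (seed, uid)).encode("utf-8")).digest()
    # Use the first 8 bytes as an unsigned integer and scale it to [0, 1).
    return int.from_bytes(digest[:8], "big") / 2.0 ** 64


# The same seed always yields the same score for a document ...
print(random_score("hotel#1", 42) == random_score("hotel#1", 42))
# ... while a different seed reshuffles the ordering.
print(random_score("hotel#1", 42), random_score("hotel#1", 43))
```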

Field Value factor

The field_value_factor function allows you to use a field from a document to influence the score. It is similar to using the script_score function; however, it avoids the overhead of scripting. If used on a multi-valued field, only the first value of the field is used in calculations.

As an example, imagine you have documents indexed with a numeric popularity field and wish to influence the score of each document with this field. An example doing so would look like:

"field_value_factor": {

"field": "popularity",

"factor": 1.2,

"modifier": "sqrt"

}

This translates into the following formula for scoring:

sqrt(1.2 * doc['popularity'].value)

There are a number of options for the field_value_factor function:

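| Parameter | Description |
|---|---|
| field | Field to be extracted from the document. |
| factor | Optional factor to multiply the field value with, defaults to 1. |
| modifier | Modifier to apply to the field value, can be one of: none, log, log1p, log2p, ln, ln1p, ln2p, square, sqrt, or reciprocal. Defaults to none. |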

Keep in mind that taking the log() of 0, or the square root of a negative number, is an illegal operation, and an exception will be thrown. Be sure to limit the values of the field with a range filter to avoid this, or use log1p and ln1p.
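
The formula above, together with the warning about illegal operations, can be tried out with a short Python sketch. It is not the Elasticsearch implementation: the `field_value_factor` helper is hypothetical, log is assumed to be the base-10 logarithm, and the modifier names beyond those mentioned in this section are assumed to exist with the meaning shown in the comments.

```python
import math

# Assumed meaning of each modifier; applied to factor * field value.
MODIFIERS = {
    "none": lambda v: v,
    "log": math.log10,                    # assumption: common (base-10) logarithm
    "log1p": lambda v: math.log10(v + 1),
    "log2p": lambda v: math.log10(v + 2),
    "ln": math.log,                       # natural logarithm
    "ln1p": math.log1p,
    "ln2p": lambda v: math.log(v + 2),
    "square": lambda v: v * v,
    "sqrt": math.sqrt,
    "reciprocal": lambda v: 1.0 / v,
}


def field_value_factor(value, factor=1.0, modifier="none"):
    """Compute modifier(factor * value) for a single field value."""
    return MODIFIERS[modifier](factor * value)


print(field_value_factor(30, factor=1.2, modifier="sqrt"))  # sqrt(1.2 * 30)
print(field_value_factor(0, modifier="log1p"))              # safe for a value of 0

try:
    field_value_factor(0, modifier="log")                   # log of 0 is illegal
except ValueError as error:
    print("illegal operation:", error)
```

In this sketch Python raises a ValueError for the logarithm of 0 and for the square root of a negative number, which mirrors the exception described above.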

Decay functions

Decay functions score a document with a function that decays depending on the distance of a numeric field value of the document from a user-given origin. This is similar to a range query, but with smooth edges instead of boxes.

To use distance scoring on a query that has numerical fields, the user has to define an origin and a scale for each field. The origin is needed to define the “central point” from which the distance is calculated, and the scale to define the rate of decay. The decay function is specified as

"DECAY_FUNCTION": {

"FIELD_NAME": {

"origin": "11, 12",

"scale": "2km",

"offset": "0km",

"decay": 0.33

}

}

where DECAY_FUNCTION is one of "linear", "exp" or "gauss" (see below). The specified field must be a numeric field. In the above example, the field is a Geo Point Type and origin can be provided in geo format. scale and offset must be given with a unit in this case. If your field is a date field, you can set scale and offset as days, weeks, and so on. Example:

"DECAY_FUNCTION": {

"FIELD_NAME": {

"origin": "2013-09-17",

"scale": "10d",

"offset": "5d",

"decay" : 0.5

}

}

The format of the origin depends on the Date Format defined in your mapping. If you do not define the origin, the current time is used.

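The offset and decay parameters are optional.

| Parameter | Description |
|---|---|
| offset | If an offset is defined, the decay function will only compute the decay function for documents with a distance greater than the defined offset. The default is 0. |
| decay | The decay parameter defines how documents are scored at the distance given at scale. If no decay is defined, documents at the distance scale will be scored 0.5. |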

In the first example, your documents might represent hotels and contain a geo location field. You want to compute a decay function depending on how far the hotel is from a given location. You might not immediately see what scale to choose for the gauss function, but you can say something like: "At a distance of 2km from the desired location, the score should be reduced to one third." The decay function is then adjusted automatically to assure that it computes a score of 0.33 for hotels that are 2km away from the desired location.

In the second example, documents with a field value between 2013-09-12 and 2013-09-22 would get a weight of 1.0, and documents which are 15 days from the origin would get a weight of 0.5.

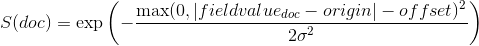

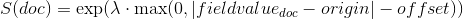

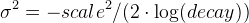

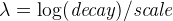

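The DECAY_FUNCTION determines the shape of the decay:

gauss

Normal decay, computed as:

$$S(doc) = \exp\left(-\frac{\max\left(0, \lvert \mathit{fieldvalue}_{doc} - \mathit{origin} \rvert - \mathit{offset}\right)^2}{2\sigma^2}\right)$$

where $\sigma$ is computed to assure that the score takes the value decay at distance scale from origin+-offset:

$$\sigma^2 = -\frac{\mathit{scale}^2}{2 \cdot \ln(\mathit{decay})}$$

exp

Exponential decay, computed as:

$$S(doc) = \exp\left(\lambda \cdot \max\left(0, \lvert \mathit{fieldvalue}_{doc} - \mathit{origin} \rvert - \mathit{offset}\right)\right)$$

where again the parameter $\lambda$ is computed to assure that the score takes the value decay at distance scale from origin+-offset:

$$\lambda = \frac{\ln(\mathit{decay})}{\mathit{scale}}$$

linear

Linear decay, computed as:

$$S(doc) = \max\left(\frac{s - \max\left(0, \lvert \mathit{fieldvalue}_{doc} - \mathit{origin} \rvert - \mathit{offset}\right)}{s}, 0\right)$$

where again the parameter $s$ is computed to assure that the score takes the value decay at distance scale from origin+-offset:

$$s = \frac{\mathit{scale}}{1 - \mathit{decay}}$$

In contrast to the normal and exponential decay, this function actually sets the score to 0 once the distance from origin+-offset exceeds $s$, which is twice the user-given scale value for a decay of 0.5.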
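
The three shapes can be compared with a small Python sketch. It assumes the usual reading of the parameters: the distance is the absolute difference from the origin reduced by the offset (never below 0), and each function is calibrated so that the score equals decay at distance scale. The function names are hypothetical, and plain numbers stand in for dates or geo distances.

```python
import math


def _distance(value, origin, offset):
    """Distance from the origin; values within the offset count as 0."""
    return max(0.0, abs(value - origin) - offset)


def gauss_decay(value, origin, scale, offset=0.0, decay=0.5):
    # sigma^2 is chosen so that the score equals `decay` at distance `scale`.
    sigma_sq = -scale ** 2 / (2.0 * math.log(decay))
    return math.exp(-_distance(value, origin, offset) ** 2 / (2.0 * sigma_sq))


def exp_decay(value, origin, scale, offset=0.0, decay=0.5):
    # lambda is negative, so the score shrinks as the distance grows.
    lam = math.log(decay) / scale
    return math.exp(lam * _distance(value, origin, offset))


def linear_decay(value, origin, scale, offset=0.0, decay=0.5):
    # The score reaches 0 at distance s and stays there.
    s = scale / (1.0 - decay)
    return max((s - _distance(value, origin, offset)) / s, 0.0)


# Date example expressed in days: origin 0, scale 10d, offset 5d, decay 0.5.
for days in (0, 5, 15, 25, 40):
    print(days,
          round(gauss_decay(days, 0, 10, 5), 3),
          round(exp_decay(days, 0, 10, 5), 3),
          round(linear_decay(days, 0, 10, 5), 3))
```

With these settings all three functions return 1.0 up to 5 days from the origin and 0.5 at 15 days; only the linear variant drops to exactly 0, here from 25 days onward.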

Multiple values:

If a field used for computing the decay contains multiple values, by default the value closest to the origin is chosen for determining the distance. This can be changed by setting multi_value_mode.

| multi_value_mode | Description |
|---|---|
| min | Distance is the minimum distance |
| max | Distance is the maximum distance |
| avg | Distance is the average distance |
| sum | Distance is the sum of all distances |

Example:

"DECAY_FUNCTION": {

"FIELD_NAME": {

"origin": ...,

"scale": ...

},

"multi_value_mode": "avg"

}
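
How a multi-valued field is reduced to a single distance might be sketched like this. The `decay_distance` helper is hypothetical and works on plain numbers; the default of "min" reflects the statement that the value closest to the origin is chosen.

```python
def decay_distance(values, origin, multi_value_mode="min"):
    """Reduce the distances of all field values to one distance."""
    if not values:
        raise ValueError("the field has no values")
    distances = [abs(value - origin) for value in values]
    reducers = {
        "min": min,
        "max": max,
        "avg": lambda d: sum(d) / len(d),
        "sum": sum,
    }
    return reducers[multi_value_mode](distances)


# A document with three values and an origin of 10.
for mode in ("min", "max", "avg", "sum"):
    print(mode, decay_distance([8, 12, 20], origin=10, multi_value_mode=mode))
```

The resulting distance is then what the chosen decay function is evaluated on.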

Detailed example

Suppose you are searching for a hotel in a certain town. Your budget is limited. Also, you would like the hotel to be close to the town center, so the farther the hotel is from the desired location the less likely you are to check in.

You would like the query results that match your criterion (for example, "hotel, Nancy, non-smoker") to be scored with respect to distance to the town center and also the price.

Intuitively, you would like to define the town center as the origin, and maybe you are willing to walk 2km to the town center from the hotel. In this case your origin for the location field is the town center and the scale is ~2km.

If your budget is low, you would probably prefer something cheap over something expensive. For the price field, the origin would be 0 Euros and the scale depends on how much you are willing to pay, for example 20 Euros.

In this example, the fields might be called "price" for the price of the hotel and "location" for the coordinates of this hotel.

The function for price in this case would be

"DECAY_FUNCTION": {

"price": {

"origin": "0",

"scale": "20"

}

}

and for location:

"DECAY_FUNCTION": {

"location": {

"origin": "11, 12",

"scale": "2km"

}

}

where DECAY_FUNCTION can be "linear", "exp" and "gauss".

Suppose you want to multiply these two functions with the original score. The request would then look like this:

    curl 'localhost:9200/hotels/_search/' -d '{
      "query": {
        "function_score": {
          "functions": [
            {
              "DECAY_FUNCTION": {
                "price": {
                  "origin": "0",
                  "scale": "20"
                }
              }
            },
            {
              "DECAY_FUNCTION": {
                "location": {
                  "origin": "11, 12",
                  "scale": "2km"
                }
              }
            }
          ],
          "query": {
            "match": {
              "properties": "balcony"
            }
          },
          "score_mode": "multiply"
        }
      }
    }'
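
To get a feeling for the multiplier such a request produces, the two decays can be evaluated by hand. The sketch below picks gauss for DECAY_FUNCTION and assumes that decay is left at 0.5 and offset at 0; it also takes the distance to the town center as a precomputed number of kilometres instead of deriving it from coordinates. The helper names are hypothetical.

```python
import math


def gauss(distance, scale, decay=0.5):
    """Gaussian decay that equals `decay` at distance `scale`."""
    sigma_sq = -scale ** 2 / (2.0 * math.log(decay))
    return math.exp(-distance ** 2 / (2.0 * sigma_sq))


def hotel_multiplier(price_eur, distance_km):
    """Product of the price and location decays (score_mode multiply)."""
    price_factor = gauss(price_eur, scale=20.0)       # origin 0 Euros, scale 20
    location_factor = gauss(distance_km, scale=2.0)   # origin town center, scale 2km
    return price_factor * location_factor


# Hypothetical hotels: (price in Euros, distance to the center in km).
for price, distance in ((0, 0.0), (20, 0.0), (20, 2.0), (10, 0.5)):
    print(price, distance, round(hotel_multiplier(price, distance), 3))
```

A free hotel at the town center keeps a multiplier of 1.0, while a hotel that is exactly one scale away on both axes ends up at 0.5 * 0.5 = 0.25.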

Next, we show what the computed score looks like for each of the three possible decay functions.

Normal decay, keyword gauss

When choosing gauss as the decay function in the above example, the contour and surface plots of the multiplier look like this:

Suppose your original search results match three hotels:

- "Backpack Nap"

- "Drink n Drive"

- "BnB Bellevue"

"Drink n Drive" is pretty far from your defined location (nearly 2 km) and is not too cheap (about 13 Euros), so it gets a low factor of 0.56. "BnB Bellevue" and "Backpack Nap" are both pretty close to the defined location, but "BnB Bellevue" is cheaper, so it gets a multiplier of 0.86 whereas "Backpack Nap" gets a value of 0.66.

Exponential decay, keyword exp

When choosing exp as the decay function in the above example, the contour and surface plots of the multiplier look like this:

Linear decay, keyword linear

When choosing linear as the decay function in the above example, the contour and surface plots of the multiplier look like this:

Supported fields for decay functions

Only single-valued numeric fields, including time and geo locations, are supported.

What if a field is missing?

If the numeric field is missing in the document, the function will return 1.

Relation to custom_boost_factor, custom_score and custom_filters_score

The custom_boost_factor query

"custom_boost_factor": {

"boost_factor": 5.2,

"query": {...}

}

becomes

"function_score": {

"weight": 5.2,

"query": {...}

}

The custom_score query

"custom_score": {

"params": {

"param1": 2,

"param2": 3.1

},

"query": {...},

"script": "_score * doc['my_numeric_field'].value / pow(param1, param2)"

}

becomes

"function_score": {

"boost_mode": "replace",

"query": {...},

"script_score": {

"params": {

"param1": 2,

"param2": 3.1

},

"script": "_score * doc['my_numeric_field'].value / pow(param1, param2)"

}

}

and the custom_filters_score

"custom_filters_score": {

"filters": [

{

"boost": "3",

"filter": {...}

},

{

"filter": {...},

"script": "_score * doc['my_numeric_field'].value / pow(param1, param2)"

}

],

"params": {

"param1": 2,

"param2": 3.1

},

"query": {...},

"score_mode": "first"

}

becomes:

"function_score": {

"functions": [

{

"weight": "3",

"filter": {...}

},

{

"filter": {...},

"script_score": {

"params": {

"param1": 2,

"param2": 3.1

},

"script": "_score * doc['my_numeric_field'].value / pow(param1, param2)"

}

}

],

"query": {...},

"score_mode": "first"

}
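
The last mapping is mechanical enough to script. The following sketch rewrites the body of a custom_filters_score query into the equivalent function_score body, following only the correspondence shown in the examples above (boost becomes weight, a per-filter script becomes a script_score that carries the shared params). The function name `migrate_custom_filters_score` is hypothetical, and any option not shown in the examples is not handled.

```python
def migrate_custom_filters_score(old):
    """Rewrite a custom_filters_score body as a function_score body."""
    params = old.get("params")
    functions = []
    for entry in old["filters"]:
        function = {"filter": entry["filter"]}
        if "boost" in entry:
            # A per-filter boost maps to a weight.
            function["weight"] = entry["boost"]
        if "script" in entry:
            # A per-filter script maps to script_score with the shared params.
            script_score = {"script": entry["script"]}
            if params is not None:
                script_score["params"] = params
            function["script_score"] = script_score
        functions.append(function)
    new = {"functions": functions, "query": old["query"]}
    if "score_mode" in old:
        new["score_mode"] = old["score_mode"]
    return new


old_query = {
    "filters": [
        {"boost": "3", "filter": {"term": {"stars": 5}}},  # placeholder filter
        {"filter": {"term": {"pool": True}},               # placeholder filter
         "script": "_score * doc['my_numeric_field'].value / pow(param1, param2)"},
    ],
    "params": {"param1": 2, "param2": 3.1},
    "query": {"match_all": {}},                            # placeholder query
    "score_mode": "first",
}
print(migrate_custom_filters_score(old_query))
```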