Inference APIs

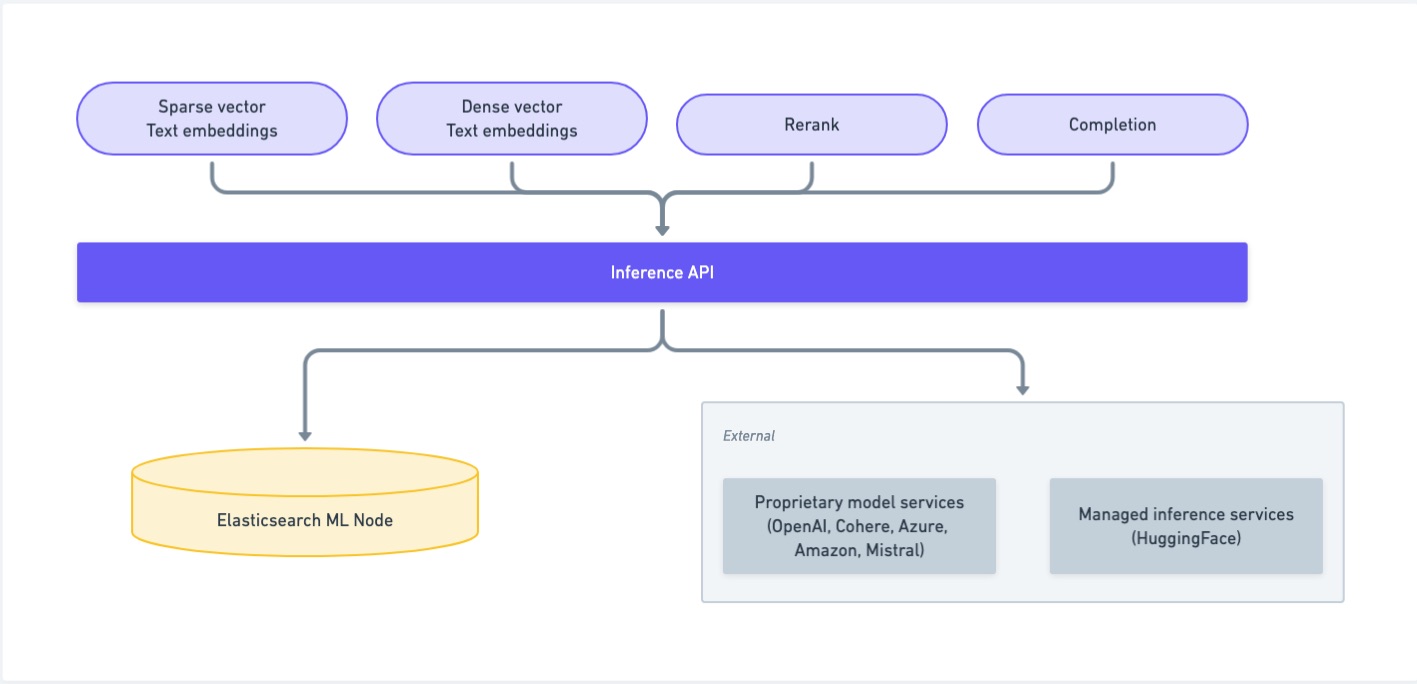

The inference APIs enable you to use certain services, such as built-in machine learning models (ELSER, E5), models uploaded through Eland, Cohere, OpenAI, Azure, Google AI Studio, or Hugging Face. For built-in models and models uploaded through Eland, the inference APIs offer an alternative way to use and manage trained models. However, if you do not plan to use the inference APIs with these models, or if you want to use non-NLP models, use the Machine learning trained model APIs.

The inference APIs enable you to create inference endpoints and integrate with machine learning models from different services, such as Amazon Bedrock, Anthropic, Azure AI Studio, Cohere, Google AI, Mistral, OpenAI, or Hugging Face. Use the inference APIs to manage inference models and perform inference.

An inference endpoint enables you to use the corresponding machine learning model without manual deployment and apply it to your data at ingestion time through semantic text.

Choose a model from your service or use ELSER, a retrieval model trained by Elastic, then create an inference endpoint with the Create inference API. You can then use semantic text to perform semantic search on your data.
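The workflow above can be sketched as three request bodies: one that creates the endpoint, one that maps a semantic_text field to it, and one that queries the field. This is a minimal illustration, not a complete setup. The endpoint ID, index name, and field name are hypothetical, and the endpoint settings simply mirror the elasticsearch service example later on this page.

```python
import json

# Hypothetical names, chosen only for this illustration.
INFERENCE_ID = "my-elser-endpoint"
INDEX_NAME = "my-semantic-index"
FIELD_NAME = "content"

# Body for: PUT _inference/sparse_embedding/my-elser-endpoint
endpoint_body = {
    "service": "elasticsearch",
    "service_settings": {"num_allocations": 1, "num_threads": 1},
}

# Body for: PUT my-semantic-index
# The semantic_text field refers to the endpoint by its inference ID.
index_body = {
    "mappings": {
        "properties": {
            FIELD_NAME: {"type": "semantic_text", "inference_id": INFERENCE_ID}
        }
    }
}

# Body for: GET my-semantic-index/_search
search_body = {
    "query": {
        "semantic": {"field": FIELD_NAME, "query": "How do I configure chunking?"}
    }
}

# Serialize the bodies so they can be pasted into a console request.
requests_to_send = [
    ("PUT", f"_inference/sparse_embedding/{INFERENCE_ID}", json.dumps(endpoint_body)),
    ("PUT", INDEX_NAME, json.dumps(index_body)),
    ("GET", f"{INDEX_NAME}/_search", json.dumps(search_body)),
]
```

The only link between the index and the model is the inference ID, so the endpoint has to exist before documents are ingested into the field.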

Adaptive allocations

Adaptive allocations allow inference services to dynamically adjust the number of model allocations based on the current load.

When adaptive allocations are enabled:

- The number of allocations scales up automatically when the load increases.

- Allocations scale down to a minimum of 0 when the load decreases, saving resources.

For more information about adaptive allocations and resources, refer to the trained model autoscaling documentation.
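As a rough sketch, adaptive allocations are typically switched on in the service_settings of an inference endpoint. The helper below is hypothetical, and the field names (adaptive_allocations, enabled, min_number_of_allocations, max_number_of_allocations) are assumptions based on the elasticsearch service settings rather than something stated on this page, so check them against the documentation for your version.

```python
def adaptive_service_settings(min_allocations: int, max_allocations: int,
                              num_threads: int = 1) -> dict:
    """Build a service_settings dict with adaptive allocations enabled.

    Hypothetical helper: the field names are assumed, not taken from this page.
    """
    if min_allocations < 0:
        raise ValueError("min_allocations must not be negative")
    if max_allocations < min_allocations:
        raise ValueError("max_allocations must be >= min_allocations")
    return {
        "num_threads": num_threads,
        "adaptive_allocations": {
            "enabled": True,
            "min_number_of_allocations": min_allocations,
            "max_number_of_allocations": max_allocations,
        },
    }


# A minimum of 0 lets the deployment scale all the way down when idle.
settings = adaptive_service_settings(min_allocations=0, max_allocations=4)
```

A minimum of 0 saves the most resources. A higher minimum keeps allocations running during quiet periods.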

Configuring chunking

Inference endpoints have a limit on the amount of text they can process at once, determined by the model’s input capacity.

Chunking is the process of splitting the input text into pieces that remain within these limits.

It occurs when ingesting documents into semantic_text fields.

Chunking also helps produce sections that are digestible for humans.

Returning a long document in search results is less useful than providing the most relevant chunk of text.

Each chunk will include the text subpassage and the corresponding embedding generated from it.

By default, documents are split into sentences and grouped into sections of up to 250 words with a one-sentence overlap, so that each chunk shares a sentence with the previous chunk. Overlapping ensures continuity and prevents vital contextual information in the input text from being lost at a hard break.

Elasticsearch uses the ICU4J library to detect word and sentence boundaries for chunking. Word boundaries are identified by following a series of rules, not just the presence of a whitespace character. For written languages that do not use whitespace, such as Chinese or Japanese, dictionary lookups are used to detect word boundaries.
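To see why whitespace alone is not enough, the short sketch below counts words with a plain whitespace split. It does not use ICU4J and does not show how Elasticsearch tokenizes text. It only demonstrates the failure mode that rule-based and dictionary-based boundary detection avoids. The sample strings are illustrative.

```python
def whitespace_tokens(text: str) -> list[str]:
    """Naive word splitting: break only on whitespace characters."""
    return text.split()


english = "Chunking splits long text"
chinese = "分块会拆分长文本"  # roughly: "chunking splits long text"

english_count = len(whitespace_tokens(english))
chinese_count = len(whitespace_tokens(chinese))

# The Chinese sentence contains several words but no whitespace, so a naive
# splitter reports it as a single token and a word-based size limit would
# badly undercount its length.
print(english_count, chinese_count)
```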

Chunking strategies

Two strategies are available for chunking: sentence and word.

The sentence strategy splits the input text at sentence boundaries.

Each chunk contains one or more complete sentences, which preserves sentence-level context. The exception is a single sentence whose word count exceeds max_chunk_size: that sentence is split across chunks.

The sentence_overlap option defines the number of sentences from the previous chunk to include in the current chunk. It can be either 0 or 1.
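The sentence strategy can be approximated in a few lines of Python. This is a simplified sketch and not the Elasticsearch implementation. It detects sentences with a naive regular expression instead of ICU4J, counts words by whitespace, and the function names are hypothetical. What happens when a carried-over sentence leaves no room for the next one is also an assumption of this sketch.

```python
import re


def split_sentences(text: str) -> list[str]:
    """Naive sentence splitter: break after '.', '!' or '?' followed by whitespace."""
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]


def chunk_by_sentence(text: str, max_chunk_size: int, sentence_overlap: int) -> list[str]:
    """Group whole sentences into chunks of at most max_chunk_size words."""
    if sentence_overlap not in (0, 1):
        raise ValueError("sentence_overlap must be 0 or 1")
    chunks: list[str] = []
    current: list[str] = []  # sentences in the chunk being built
    current_words = 0
    for sentence in split_sentences(text):
        words = sentence.split()
        if len(words) > max_chunk_size:
            # A single oversized sentence is split across several chunks.
            if current:
                chunks.append(" ".join(current))
                current, current_words = [], 0
            for i in range(0, len(words), max_chunk_size):
                chunks.append(" ".join(words[i:i + max_chunk_size]))
            continue
        if current and current_words + len(words) > max_chunk_size:
            chunks.append(" ".join(current))
            # Carry the last sentence into the next chunk when overlap is 1.
            current = current[-1:] if sentence_overlap == 1 else []
            current_words = sum(len(s.split()) for s in current)
            if current_words + len(words) > max_chunk_size:
                # Sketch assumption: drop the carried sentence if it leaves no room.
                current, current_words = [], 0
        current.append(sentence)
        current_words += len(words)
    if current:
        chunks.append(" ".join(current))
    return chunks


sample = "One two three. Four five six. Seven eight nine. Ten eleven twelve."
with_overlap = chunk_by_sentence(sample, max_chunk_size=6, sentence_overlap=1)
without_overlap = chunk_by_sentence(sample, max_chunk_size=6, sentence_overlap=0)
```

With an overlap of 1, the last sentence of each chunk is repeated at the start of the next chunk. With an overlap of 0, the chunks are disjoint.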

The word strategy splits the input text on individual words up to the max_chunk_size limit.

The overlap option is the number of words from the previous chunk to include in the current chunk.
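The word strategy amounts to a sliding window over the words of the input. The sketch below is an approximation under stated assumptions: it splits words on whitespace rather than with ICU4J, the function name is hypothetical, and the requirement that overlap be smaller than max_chunk_size is a constraint of this sketch, not a documented Elasticsearch limit.

```python
def chunk_by_word(text: str, max_chunk_size: int, overlap: int) -> list[str]:
    """Split text into windows of max_chunk_size words that share `overlap` words."""
    if max_chunk_size <= 0:
        raise ValueError("max_chunk_size must be positive")
    if overlap < 0 or overlap >= max_chunk_size:
        raise ValueError("overlap must be in the range [0, max_chunk_size)")
    words = text.split()
    step = max_chunk_size - overlap  # how far the window advances each time
    chunks: list[str] = []
    start = 0
    while start < len(words):
        chunks.append(" ".join(words[start:start + max_chunk_size]))
        if start + max_chunk_size >= len(words):
            break  # the window already reached the end of the text
        start += step
    return chunks


word_chunks = chunk_by_word("a b c d e f g h i j", max_chunk_size=4, overlap=1)
```

Here each chunk begins with the final word of the previous chunk, so text near a boundary appears in both neighboring chunks.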

The default chunking strategy is sentence.

The default chunking strategy for inference endpoints created before 8.16 is word.

Example of configuring the chunking behavior

The following example creates an inference endpoint with the elasticsearch service that deploys the ELSER model by default and configures the chunking behavior.

```python
resp = client.inference.put(
    task_type="sparse_embedding",
    inference_id="small_chunk_size",
    inference_config={
        "service": "elasticsearch",
        "service_settings": {
            "num_allocations": 1,
            "num_threads": 1
        },
        "chunking_settings": {
            "strategy": "sentence",
            "max_chunk_size": 100,
            "sentence_overlap": 0
        }
    },
)
print(resp)
```

```js
const response = await client.inference.put({
  task_type: "sparse_embedding",
  inference_id: "small_chunk_size",
  inference_config: {
    service: "elasticsearch",
    service_settings: {
      num_allocations: 1,
      num_threads: 1,
    },
    chunking_settings: {
      strategy: "sentence",
      max_chunk_size: 100,
      sentence_overlap: 0,
    },
  },
});
console.log(response);
```

```console
PUT _inference/sparse_embedding/small_chunk_size
{
  "service": "elasticsearch",
  "service_settings": {
    "num_allocations": 1,
    "num_threads": 1
  },
  "chunking_settings": {
    "strategy": "sentence",
    "max_chunk_size": 100,
    "sentence_overlap": 0
  }
}
```