Azure module

This functionality is in beta and is subject to change. The design and code are less mature than official GA features and are being provided as-is with no warranties. Beta features are not subject to the support SLA of official GA features.

The azure module retrieves different types of log data from Azure. Because the module reads the logs from Azure Event Hubs, several requirements must be met before you use it:

- The logs must first be exported to Event Hubs: https://docs.microsoft.com/en-us/azure/event-hubs/event-hubs-create-kafka-enabled

- To export activity logs to Event Hubs, follow the steps at https://docs.microsoft.com/en-us/azure/azure-monitor/platform/activity-log-export

- To export audit and sign-in logs to Event Hubs, follow the steps at https://docs.microsoft.com/en-us/azure/active-directory/reports-monitoring/tutorial-azure-monitor-stream-logs-to-event-hub

The module contains the following filesets:

- activitylogs - Retrieves Azure activity logs, which record control-plane events on Azure Resource Manager resources. Activity logs provide insight into the operations that were performed on resources in your subscription.

- signinlogs - Retrieves Azure Active Directory sign-in logs. The sign-ins report provides information about the usage of managed applications and user sign-in activities.

- auditlogs - Retrieves Azure Active Directory audit logs. The audit logs provide traceability for all changes made by various features within Azure AD. Examples include changes made to any resources within Azure AD, such as adding or removing users, apps, groups, roles, and policies.

Module configuration

    - module: azure
      activitylogs:
        enabled: true
        var:
          eventhub: "insights-operational-logs"
          consumer_group: "$Default"
          connection_string: ""
          storage_account: ""
          storage_account_key: ""
      auditlogs:
        enabled: false
        var:
          eventhub: "insights-logs-auditlogs"
          consumer_group: "$Default"
          connection_string: ""
          storage_account: ""
          storage_account_key: ""
      signinlogs:
        enabled: false
        var:
          eventhub: ["insights-logs-signinlogs"]
          consumer_group: "$Default"
          connection_string: ""
          storage_account: ""
          storage_account_key: ""

- eventhub - []string. The event hub to read from. Event Hubs is a fully managed, real-time data ingestion service. Default value: insights-operational-logs

- consumer_group - string. The publish/subscribe mechanism of Event Hubs is enabled through consumer groups. A consumer group is a view (state, position, or offset) of an entire event hub. Consumer groups enable multiple consuming applications to each have a separate view of the event stream, and to read the stream independently at their own pace and with their own offsets. Default value: $Default

- connection_string - string. The connection string required to communicate with Event Hubs. For the steps to obtain it, see https://docs.microsoft.com/en-us/azure/event-hubs/event-hubs-get-connection-string.

A Blob Storage account is required to store, retrieve, and update the offset (state) of the event hub messages. This means that after the Filebeat azure module is stopped, it can start back up at the point where it stopped processing messages.

- storage_account - string. The name of the storage account in which the state/offsets are stored and updated.

- storage_account_key - string. The storage account key, which is used to authorize access to data in your storage account.
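As a rough illustration of how the options above fit together, the following sketch enables only the activitylogs fileset. Every value in angle brackets is a placeholder. The consumer group name `filebeat` is a hypothetical dedicated group that is assumed to already exist on the event hub. The connection string shows only the general shape of an Event Hubs connection string and is not a value taken from this page.

```yaml
- module: azure
  activitylogs:
    enabled: true
    var:
      # Event hub that receives the exported activity logs (the module default).
      eventhub: "insights-operational-logs"
      # Hypothetical dedicated consumer group; use "$Default" if you have not created one.
      consumer_group: "filebeat"
      # Placeholder connection string; copy the real value from the Azure portal.
      connection_string: "Endpoint=sb://<namespace>.servicebus.windows.net/;SharedAccessKeyName=<policy-name>;SharedAccessKey=<key>"
      # Placeholder Blob Storage account used to persist offsets between restarts.
      storage_account: "<storage-account-name>"
      storage_account_key: "<storage-account-key>"
```

Using a consumer group other than `$Default` gives Filebeat its own view of the stream, so other applications reading the same event hub keep their own offsets.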

When you run the module, it performs a few tasks under the hood:

- Sets the default paths to the log files (but don’t worry, you can override the defaults)

- Makes sure each multiline log event gets sent as a single event

- Uses ingest node to parse and process the log lines, shaping the data into a structure suitable for visualizing in Kibana

Read the quick start to learn how to set up and run modules.

Compatibility

TODO: document which versions of the software this module has been tested with.

Dashboards

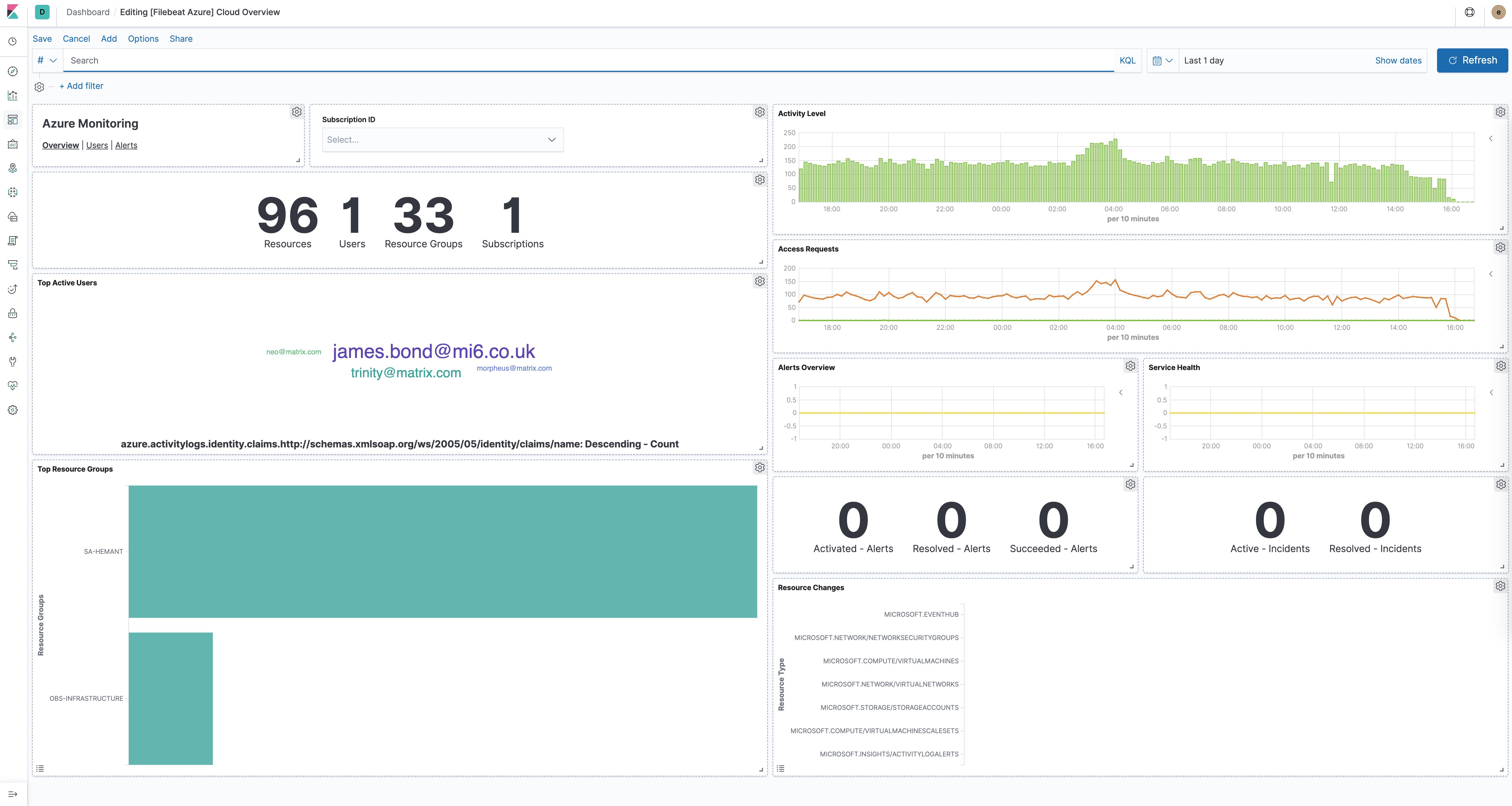

editThe azure module comes with several predefined dashboards for general cloud overview, user activity and alerts. For example:

Fields

For a description of each field in the module, see the exported fields section.