Tutorial: Automate rollover with ILM

editTutorial: Automate rollover with ILM

editWhen you continuously index timestamped documents into Elasticsearch, you typically use a data stream so you can periodically roll over to a new index. This enables you to implement a hot-warm-cold architecture to meet your performance requirements for your newest data, control costs over time, enforce retention policies, and still get the most out of your data.

Data streams are best suited for

append-only use cases. If you need to update or delete existing time

series data, you can perform update or delete operations directly on the data stream backing index.

If you frequently send multiple documents using the same _id expecting last-write-wins, you may

want to use an index alias with a write index instead. You can still use ILM to manage and rollover

the alias’s indices. Skip to Manage time series data without data streams.

To automate rollover and management of a data stream with ILM, you:

- Create a lifecycle policy that defines the appropriate phases and actions.

- Create an index template to create the data stream and apply the ILM policy and the indices settings and mappings configurations for the backing indices.

- Verify indices are moving through the lifecycle phases as expected.

For an introduction to rolling indices, see Rollover.

When you enable index lifecycle management for Beats or the Logstash Elasticsearch output plugin, lifecycle policies are set up automatically. You do not need to take any other actions. You can modify the default policies through Kibana Management or the ILM APIs.

Create a lifecycle policy

editA lifecycle policy specifies the phases in the index lifecycle

and the actions to perform in each phase. A lifecycle can have up to five phases:

hot, warm, cold, frozen, and delete.

For example, you might define a timeseries_policy that has two phases:

-

A

hotphase that defines a rollover action to specify that an index rolls over when it reaches either amax_primary_shard_sizeof 50 gigabytes or amax_ageof 30 days. -

A

deletephase that setsmin_ageto remove the index 90 days after rollover.

The min_age value is relative to the rollover time, not the index creation time.

You can create the policy through Kibana or with the create or update policy API. To create the policy from Kibana, open the menu and go to Stack Management > Index Lifecycle Policies. Click Create policy.

API example

response = client.ilm.put_lifecycle( policy: 'timeseries_policy', body: { policy: { phases: { hot: { actions: { rollover: { max_primary_shard_size: '50GB', max_age: '30d' } } }, delete: { min_age: '90d', actions: { delete: {} } } } } } ) puts response

Create an index template to create the data stream and apply the lifecycle policy

editTo set up a data stream, first create an index template to specify the lifecycle policy. Because

the template is for a data stream, it must also include a data_stream definition.

For example, you might create a timeseries_template to use for a future data stream

named timeseries.

To enable the ILM to manage the data stream, the template configures one ILM setting:

-

index.lifecycle.namespecifies the name of the lifecycle policy to apply to the data stream.

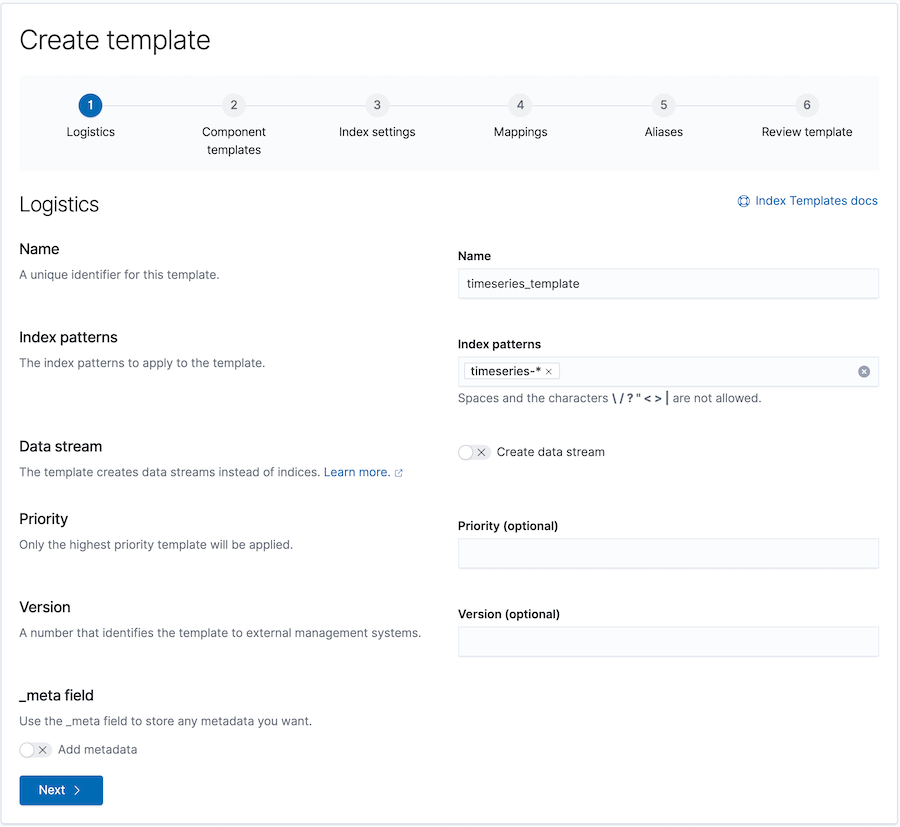

You can use the Kibana Create template wizard to add the template. From Kibana, open the menu and go to Stack Management > Index Management. In the Index Templates tab, click Create template.

This wizard invokes the create or update index template API to create the index template with the options you specify.

API example

response = client.indices.put_index_template( name: 'timeseries_template', body: { index_patterns: [ 'timeseries' ], data_stream: {}, template: { settings: { number_of_shards: 1, number_of_replicas: 1, "index.lifecycle.name": 'timeseries_policy' } } } ) puts response

Create the data stream

editTo get things started, index a document into the name or wildcard pattern defined

in the index_patterns of the index template. As long

as an existing data stream, index, or index alias does not already use the name, the index

request automatically creates a corresponding data stream with a single backing index.

Elasticsearch automatically indexes the request’s documents into this backing index, which also

acts as the stream’s write index.

For example, the following request creates the timeseries data stream and the

first generation backing index called .ds-timeseries-2099.03.08-000001.

POST timeseries/_doc { "message": "logged the request", "@timestamp": "1591890611" }

When a rollover condition in the lifecycle policy is met, the rollover action:

-

Creates the second generation backing index, named

.ds-timeseries-2099.03.08-000002. Because it is a backing index of thetimeseriesdata stream, the configuration from thetimeseries_templateindex template is applied to the new index. -

As it is the latest generation index of the

timeseriesdata stream, the newly created backing index.ds-timeseries-2099.03.08-000002becomes the data stream’s write index.

This process repeats each time a rollover condition is met.

You can search across all of the data stream’s backing indices, managed by the timeseries_policy,

with the timeseries data stream name.

Write operations are routed to the current write index. Read operations will be handled by all

backing indices.

Check lifecycle progress

editTo get status information for managed indices, you use the ILM explain API. This lets you find out things like:

- What phase an index is in and when it entered that phase.

- The current action and what step is being performed.

- If any errors have occurred or progress is blocked.

For example, the following request gets information about the timeseries data stream’s

backing indices:

response = client.ilm.explain_lifecycle( index: '.ds-timeseries-*' ) puts response

GET .ds-timeseries-*/_ilm/explain

The following response shows the data stream’s first generation backing index is waiting for the hot

phase’s rollover action.

It remains in this state and ILM continues to call check-rollover-ready until a rollover condition

is met.

{ "indices": { ".ds-timeseries-2099.03.07-000001": { "index": ".ds-timeseries-2099.03.07-000001", "index_creation_date_millis": 1538475653281, "time_since_index_creation": "30s", "managed": true, "policy": "timeseries_policy", "lifecycle_date_millis": 1538475653281, "age": "30s", "phase": "hot", "phase_time_millis": 1538475653317, "action": "rollover", "action_time_millis": 1538475653317, "step": "check-rollover-ready", "step_time_millis": 1538475653317, "phase_execution": { "policy": "timeseries_policy", "phase_definition": { "min_age": "0ms", "actions": { "rollover": { "max_primary_shard_size": "50gb", "max_age": "30d" } } }, "version": 1, "modified_date_in_millis": 1539609701576 } } } }

|

The age of the index used for calculating when to rollover the index via the |

|

|

The policy used to manage the index |

|

|

The age of the indexed used to transition to the next phase (in this case it is the same with the age of the index). |

|

|

The step ILM is performing on the index |

|

|

The definition of the current phase (the |

Manage time series data without data streams

editEven though data streams are a convenient way to scale and manage time series data, they are designed to be append-only. We recognise there might be use-cases where data needs to be updated or deleted in place and the data streams don’t support delete and update requests directly, so the index APIs would need to be used directly on the data stream’s backing indices. In these cases we still recommend using a data stream.

If you frequently send multiple documents using the same _id expecting last-write-wins, you can

use an index alias instead of a data stream to manage indices containing the time series data and

periodically roll over to a new index.

To automate rollover and management of time series indices with ILM using an index alias, you:

- Create a lifecycle policy that defines the appropriate phases and actions. See Create a lifecycle policy above.

- Create an index template to apply the policy to each new index.

- Bootstrap an index as the initial write index.

- Verify indices are moving through the lifecycle phases as expected.

Create an index template to apply the lifecycle policy

editTo automatically apply a lifecycle policy to the new write index on rollover, specify the policy in the index template used to create new indices.

For example, you might create a timeseries_template that is applied to new indices

whose names match the timeseries-* index pattern.

To enable automatic rollover, the template configures two ILM settings:

-

index.lifecycle.namespecifies the name of the lifecycle policy to apply to new indices that match the index pattern. -

index.lifecycle.rollover_aliasspecifies the index alias to be rolled over when the rollover action is triggered for an index.

You can use the Kibana Create template wizard to add the template. To access the wizard, open the menu and go to Stack Management > Index Management. In the Index Templates tab, click Create template.

The create template request for the example template looks like this:

PUT _index_template/timeseries_template { "index_patterns": ["timeseries-*"], "template": { "settings": { "number_of_shards": 1, "number_of_replicas": 1, "index.lifecycle.name": "timeseries_policy", "index.lifecycle.rollover_alias": "timeseries" } } }

|

Apply the template to a new index if its name starts with |

|

|

The name of the lifecycle policy to apply to each new index. |

|

|

The name of the alias used to reference these indices. Required for policies that use the rollover action. |

Bootstrap the initial time series index with a write index alias

editTo get things started, you need to bootstrap an initial index and designate it as the write index for the rollover alias specified in your index template. The name of this index must match the template’s index pattern and end with a number. On rollover, this value is incremented to generate a name for the new index.

For example, the following request creates an index called timeseries-000001

and makes it the write index for the timeseries alias.

PUT timeseries-000001 { "aliases": { "timeseries": { "is_write_index": true } } }

When the rollover conditions are met, the rollover action:

-

Creates a new index called

timeseries-000002. This matches thetimeseries-*pattern, so the settings fromtimeseries_templateare applied to the new index. - Designates the new index as the write index and makes the bootstrap index read-only.

This process repeats each time rollover conditions are met.

You can search across all of the indices managed by the timeseries_policy with the timeseries alias.

Write operations are routed to the current write index.

Check lifecycle progress

editRetrieving the status information for managed indices is very similar to the data stream case. See the data stream check progress section for more information. The only difference is the indices namespace, so retrieving the progress will entail the following api call:

response = client.ilm.explain_lifecycle( index: 'timeseries-*' ) puts response

GET timeseries-*/_ilm/explain