Search as we currently know it (search bar, results, filters, pages, etc.) has come a long way and serves many purposes. It works especially well when we know the keywords needed to find what we're looking for, or which documents contain the information we want. However, when the results are long documents, we still need an extra step to get the final answer: reading and summarizing them. To make this process easier, companies such as Google, with its Search Generative Experience (SGE), use AI summaries to complement the search results.

What if I told you you could do the same with Elastic?

In this article, you will learn to create a React component that displays an AI summary answering the user's question alongside the search results, helping users find answers faster. We will also ask the model to provide citations, so that answers are grounded in the search results.

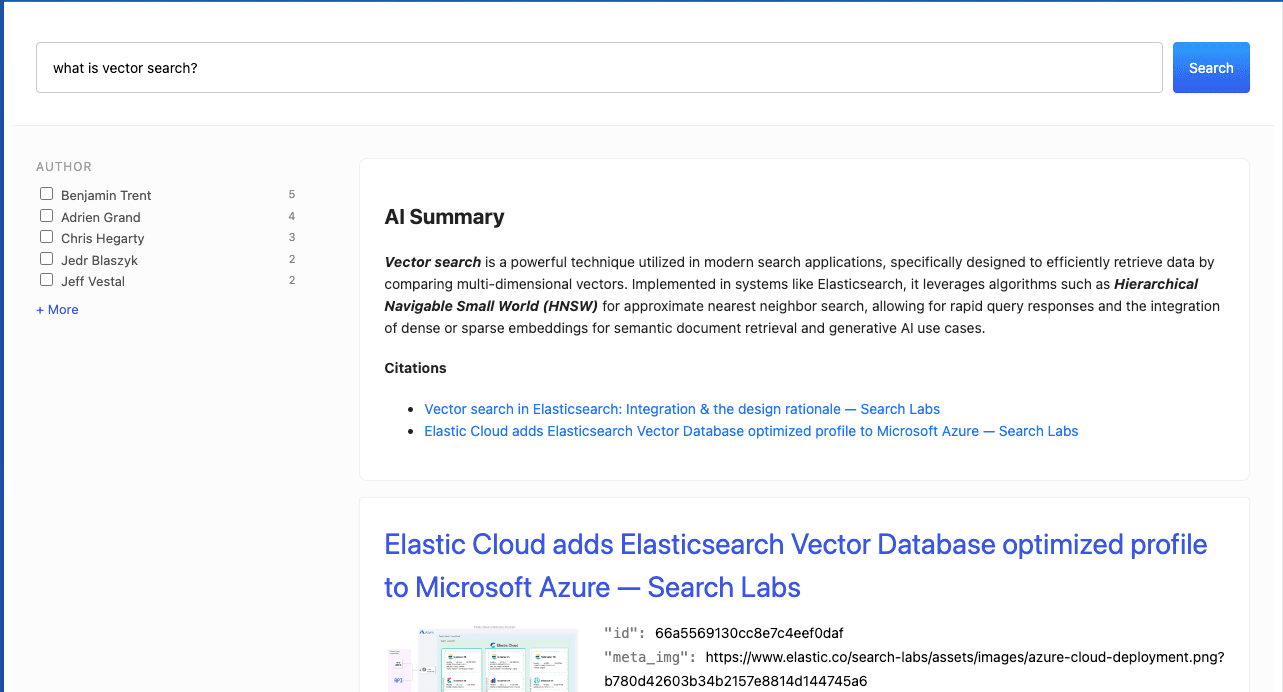

The end results will look like this:

You can find the full working example repository here.

Steps for adding AI summaries to your site with Elastic

Creating endpoints

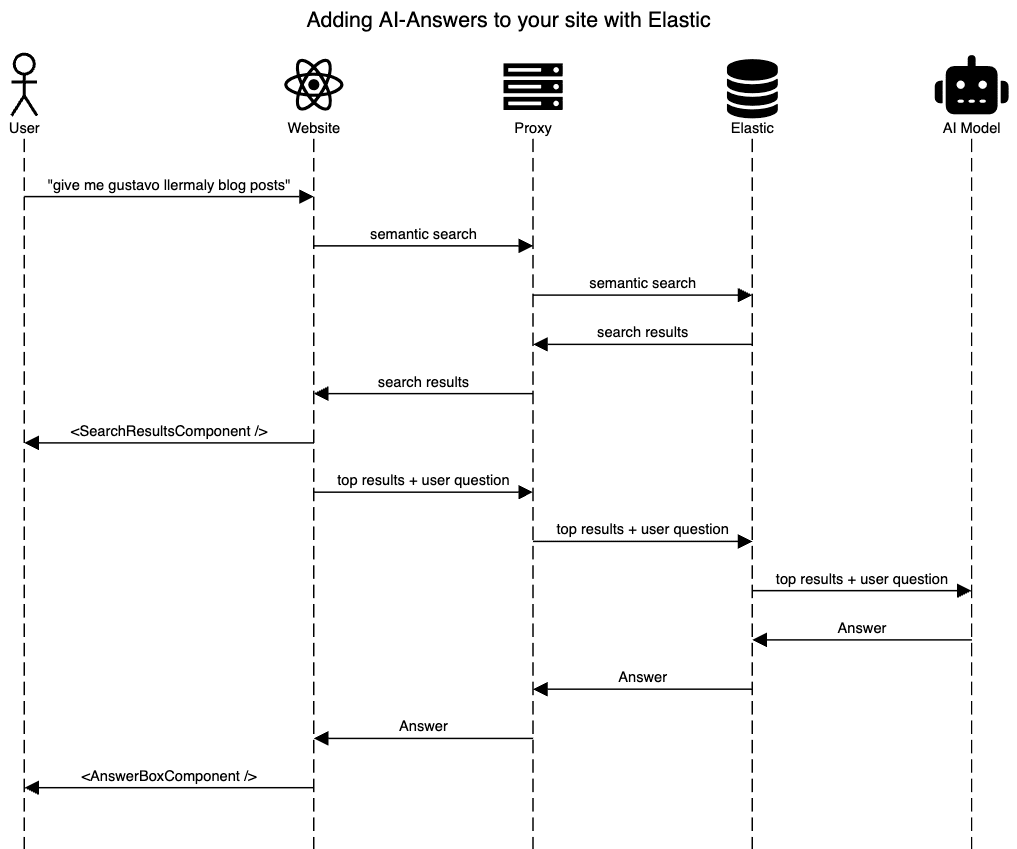

Before creating the endpoints, take a look at the high level architecture for this project.

The recommended approach to consume Elasticsearch from a UI is to proxy the calls, so we are going to spin up a backend that the UI can connect to for that purpose. You can read more about this approach here.

IMPORTANT: The approach outlined in this article provides a simple method for handling Elasticsearch queries and generating summaries. Consider your specific use case and requirements before implementing this solution. A more appropriate architecture would involve doing both search and completion under the same API call behind the proxy.

Embeddings endpoint

To enable semantic search, we are going to use the ELSER model, which lets us find documents not only by matching words but also by semantic meaning.

You can use the Kibana UI to create the ELSER endpoint:

Or via the _inference API:
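As a rough sketch of the `_inference` route, the snippet below builds the request you could send to create a sparse embedding endpoint backed by ELSER. The endpoint id `my-elser-endpoint` and the allocation and thread counts are placeholder assumptions, and the service name and settings can differ between Elasticsearch versions, so check the inference API docs for yours.

```javascript
// Builds the request for creating an ELSER sparse embedding inference endpoint.
// NOTE: the endpoint id and the settings values are illustrative assumptions.
function buildElserEndpointRequest(endpointId = "my-elser-endpoint") {
  return {
    method: "PUT",
    path: `/_inference/sparse_embedding/${encodeURIComponent(endpointId)}`,
    body: {
      service: "elser",
      service_settings: {
        num_allocations: 1,
        num_threads: 1,
      },
    },
  };
}

// Print the request in a form that is easy to paste into the Kibana Dev Tools console.
const elserRequest = buildElserEndpointRequest();
console.log(`${elserRequest.method} ${elserRequest.path}`);
console.log(JSON.stringify(elserRequest.body, null, 2));
```

The id chosen here is the one you would later reference from the semantic_text field.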

Completion endpoint

To generate the AI summary, we must send the relevant documents as context, along with the user query, to a model. To do this, we create a completion endpoint connected to OpenAI. If you don't want to work with OpenAI, you can choose from a growing list of other providers.

Every time a user runs a search we are going to call the model, so we need speed and cost efficiency, making this a good opportunity to test the new gpt-4o-mini.
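A minimal sketch of the request that creates such a completion endpoint could look like the following. The endpoint id `summaries-completion` is a hypothetical name, and the OpenAI key is passed in by the caller rather than hard-coded.

```javascript
// Builds the request for creating an OpenAI-backed completion inference endpoint.
// NOTE: "summaries-completion" is a hypothetical endpoint id chosen for this example.
function buildCompletionEndpointRequest(openAiApiKey, endpointId = "summaries-completion") {
  if (!openAiApiKey) {
    throw new Error("An OpenAI API key is required");
  }
  return {
    method: "PUT",
    path: `/_inference/completion/${encodeURIComponent(endpointId)}`,
    body: {
      service: "openai",
      service_settings: {
        api_key: openAiApiKey,
        model_id: "gpt-4o-mini",
      },
    },
  };
}

// Example with a placeholder key; replace it with your own before sending the request.
const completionRequest = buildCompletionEndpointRequest("<your-openai-api-key>");
console.log(`${completionRequest.method} ${completionRequest.path}`);
console.log(JSON.stringify(completionRequest.body, null, 2));
```

Switching providers would mean changing the `service` value and its settings, while the rest of the flow stays the same.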

Indexing data

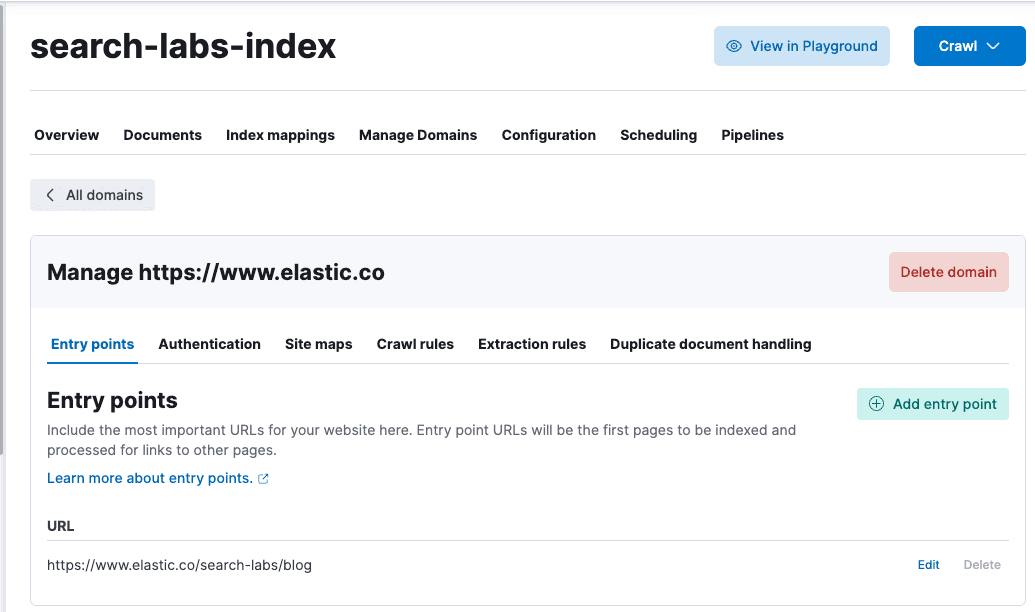

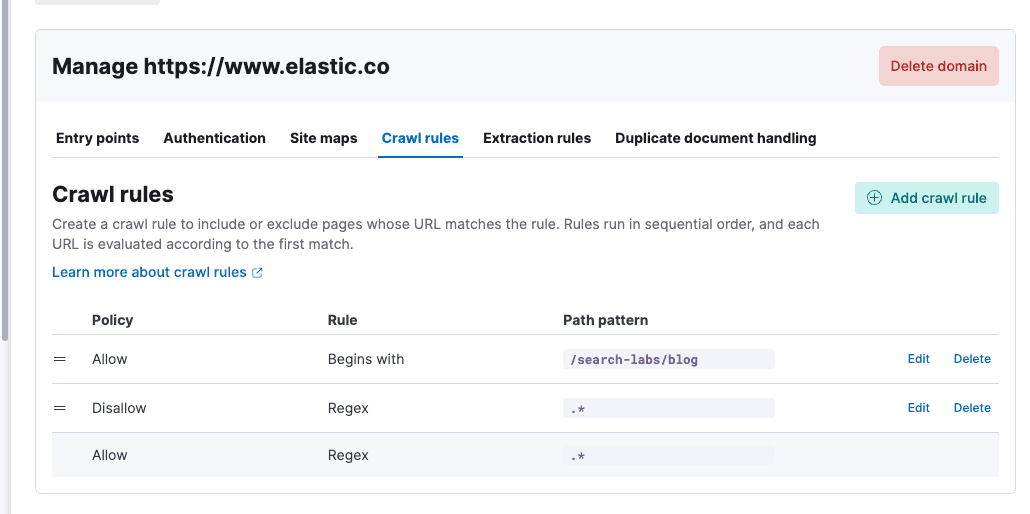

Since we are adding a search experience to our website, we can use the Elastic web crawler to index our website content and test with our own documents. For this example, I'm going to use the Elastic Labs Blog.

To create the crawler, follow the instructions in the docs.

For this example, we will use the following settings:

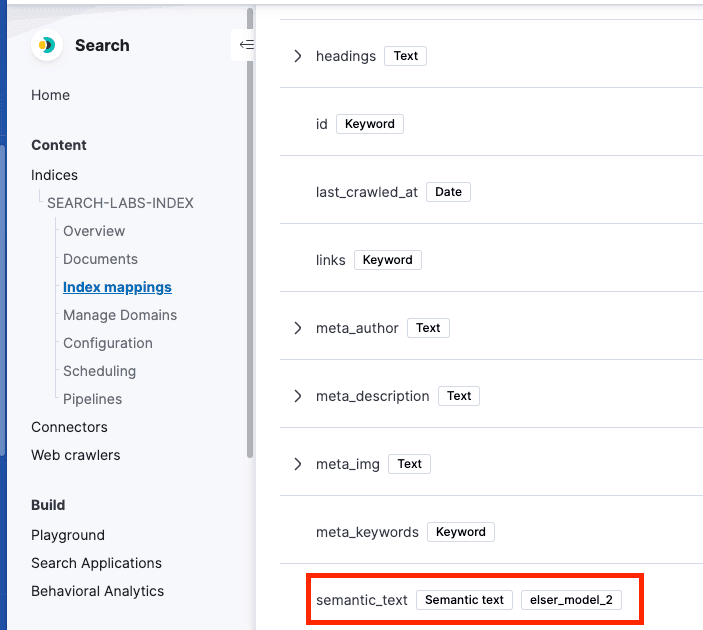

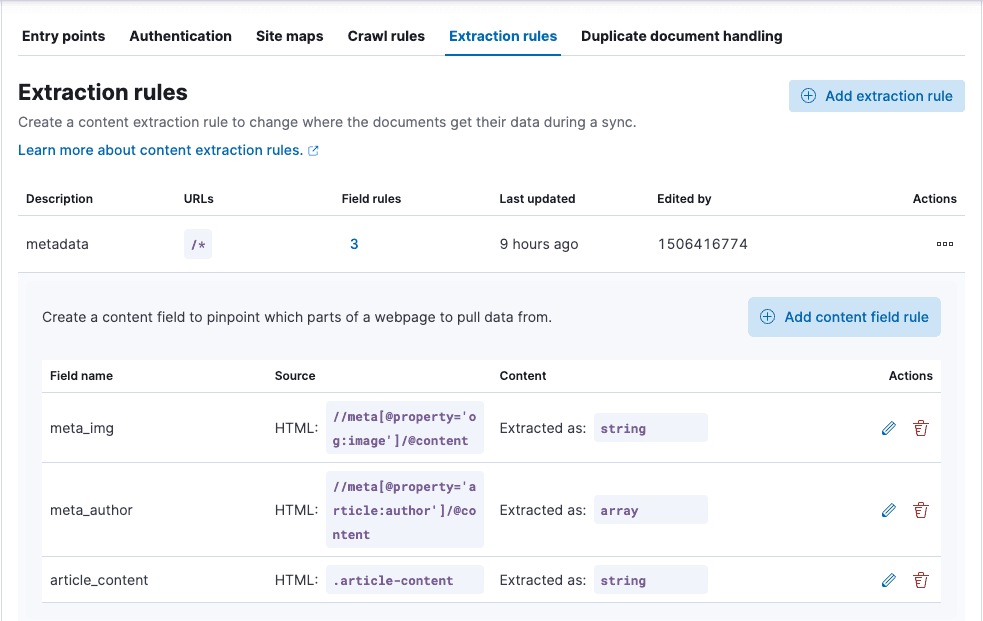

Note: I'm adding some extraction rules to clean the field values. I'm also using the semantic_text field from the crawler and associating it with the article_content field.

A brief explanation of the extracted fields:

meta_img: The article's image used as the thumbnail.

meta_author: The author's name, enabling filtering by author.

article_content: We index only the main content of the article within the div, excluding unrelated data such as headers and footers. This optimization enhances search relevance and reduces costs by generating shorter embeddings.

This is how a document will look after applying the rules and executing a crawl successfully:

Creating the proxy

To set up the proxy server, we’ll be using express.js. We'll create two endpoints following best practices: one for handling _search calls and another for completion calls.

Begin by creating a new directory called es-proxy, navigate into it using cd es-proxy, and initialize your project with npm init.

Next, install the necessary dependencies with the following command:

yarn add express axios dotenv cors

Here’s a brief explanation of each package:

express: Used to create the proxy server that will handle incoming requests and forward them to Elasticsearch.

axios: A popular HTTP client that simplifies making requests to the Elasticsearch API.

dotenv: Allows you to manage sensitive data, such as API keys, by storing them in environment variables.

cors: Enables your UI to make requests to a different domain (in this case, your proxy server) by handling Cross-Origin Resource Sharing (CORS). This is essential for avoiding issues when your frontend and backend are hosted on different domains or ports.

Now, create an .env file to securely store your Elasticsearch URL and API key:

Make sure the API key you create is read-only and restricted to the index you need.
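One way to read those settings in the proxy is sketched below. The variable names `ELASTICSEARCH_URL`, `ELASTICSEARCH_API_KEY` and `PORT` are assumptions made for this example; only the 1337 default port comes from this article. In a real project you would load the .env file with dotenv first and then pass `process.env` to the function.

```javascript
// Reads the proxy settings from an environment object (process.env in practice).
// NOTE: the variable names below are assumptions, not names required by any library.
function loadProxyConfig(env) {
  const required = ["ELASTICSEARCH_URL", "ELASTICSEARCH_API_KEY"];
  const missing = required.filter((name) => !env[name]);
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(", ")}`);
  }
  return {
    // Strip trailing slashes so API paths can be appended safely.
    elasticsearchUrl: env.ELASTICSEARCH_URL.replace(/\/+$/, ""),
    apiKey: env.ELASTICSEARCH_API_KEY,
    // Fall back to the default port when PORT is unset or not a number.
    port: Number(env.PORT) || 1337,
  };
}

// Example with placeholder values standing in for the contents of the .env file.
const exampleConfig = loadProxyConfig({
  ELASTICSEARCH_URL: "https://localhost:9200/",
  ELASTICSEARCH_API_KEY: "<your-read-only-api-key>",
});
console.log(exampleConfig.elasticsearchUrl, exampleConfig.port);
```

Failing fast on missing variables keeps a misconfigured proxy from starting and then returning confusing authentication errors to the UI.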

Finally, create an index.js file with the following content:
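As a hedged sketch of the core of such a file, the functions below build the two requests the proxy forwards to Elasticsearch: one for `_search` and one for the completion inference endpoint. The function names, the `config` shape, the index name and the endpoint id are all hypothetical. The returned objects follow the shape of an axios request config (`method`, `url`, `headers`, `data`), so each express route handler could pass one to axios and send the response data back to the UI.

```javascript
// Headers for API key authentication against Elasticsearch.
function authHeaders(apiKey) {
  return {
    Authorization: `ApiKey ${apiKey}`,
    "Content-Type": "application/json",
  };
}

// Request config that forwards a search body to the index's _search API.
function buildSearchForward(config, indexName, searchBody) {
  return {
    method: "POST",
    url: `${config.elasticsearchUrl}/${encodeURIComponent(indexName)}/_search`,
    headers: authHeaders(config.apiKey),
    data: searchBody,
  };
}

// Request config that forwards a prompt to a completion inference endpoint.
function buildCompletionForward(config, endpointId, prompt) {
  return {
    method: "POST",
    url: `${config.elasticsearchUrl}/_inference/completion/${encodeURIComponent(endpointId)}`,
    headers: authHeaders(config.apiKey),
    data: { input: prompt },
  };
}

// Example with placeholder values (hypothetical index and endpoint names).
const sampleConfig = { elasticsearchUrl: "https://localhost:9200", apiKey: "<api-key>" };
console.log(buildSearchForward(sampleConfig, "my-crawler-index", { query: { match_all: {} } }).url);
console.log(buildCompletionForward(sampleConfig, "summaries-completion", "Hello").url);
```

Keeping the API key on the server side is the point of the proxy: the browser only ever talks to the two proxy routes and never sees the Elasticsearch credentials.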

Now, start the server by running node index.js. This will launch the server on port 1337 by default, or on the port you define in your .env file.

Creating the component

For the UI component, we are going to use the Search UI React library search-ui. We will create a custom component so that every time a user runs a search, it will send the top results to the LLM using the completion inference endpoint we created, and then display the answer back to the user.

There is a full tutorial about configuring your instance that you can find here. You can run search-ui on your computer, or work with our live sandbox here.

Once you have the example running and connected to your data, run the following installation step in your terminal within the starter app folder:

yarn add axios antd html-react-parser

After installing those additional dependencies, create a new AiSummary.js file for the new component. It will include a simple prompt that gives the AI its instructions and rules.
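To illustrate what such a prompt might look like, here is a minimal sketch of a helper that turns the top search results into a prompt asking for a cited answer. The result shape (`title`, `url`, `content`), the prompt wording, and the default of three results are assumptions for this example, not the article's exact prompt.

```javascript
// Builds the prompt sent to the completion endpoint from the top search results.
// NOTE: the result shape ({ title, url, content }) and the wording are assumptions.
function buildSummaryPrompt(question, results, maxResults = 3) {
  // Number each source so the model can cite it as [1], [2], ...
  const context = results
    .slice(0, maxResults)
    .map((doc, i) => `[${i + 1}] ${doc.title} (${doc.url})\n${doc.content}`)
    .join("\n\n");

  return [
    "Answer the question using only the numbered sources below.",
    "Cite the sources you used with their numbers, for example [1].",
    "If the sources do not contain the answer, say that you could not find it.",
    "",
    `Question: ${question}`,
    "",
    "Sources:",
    context,
  ].join("\n");
}

// Example with made-up documents.
const examplePrompt = buildSummaryPrompt("What is ELSER?", [
  { title: "Title A", url: "https://example.com/a", content: "Content A" },
  { title: "Title B", url: "https://example.com/b", content: "Content B" },
]);
console.log(examplePrompt);
```

Limiting the number of results keeps the prompt short, which matters because the model is called on every search.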

Updating App.js

Now that we have created our custom component, it's time to add it to the application. This is what your App.js should look like:

Note how in the connector instantiation we have overridden the default query to use a semantic query and leverage the semantic_text mappings we created.
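The override can be sketched as a small function that swaps the generated query for a `semantic` query. The function name is hypothetical, and the default field name assumes the semantic_text field is queried as `article_content`; adjust it to whatever your mapping actually uses.

```javascript
// Replaces the generated query with a semantic query on a semantic_text field.
// NOTE: the default field name is an assumption; use the one from your mapping.
function withSemanticQuery(requestBody, searchTerm, field = "article_content") {
  if (!searchTerm) {
    // Nothing to search for: keep the original request untouched.
    return requestBody;
  }
  return {
    ...requestBody,
    query: {
      semantic: {
        field,
        query: searchTerm,
      },
    },
  };
}

// Example: the rest of the request (size, aggregations, ...) is preserved.
const original = { size: 10, query: { match_all: {} } };
console.log(JSON.stringify(withSemanticQuery(original, "how do AI summaries work"), null, 2));
```

If your version of the Search UI Elasticsearch connector accepts a callback for post-processing the request body, a function like this could be called from it with the current search term from the request state.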

Asking questions

Now it's time to test it. Ask any question about the documents you indexed, and above the search results, you should see a card with the AI Summary:

Conclusion

Redesigning your search experience is an important way to keep your users engaged and to save them the time of going through the results to find the answers to their questions. With the Elastic open inference service and search-ui, it is easier than ever to design this kind of experience. Are you ready to try it?
