Filebeat quick start: installation and configuration

This guide describes how to get started quickly with log collection. You’ll learn how to:

- install Filebeat on each system you want to monitor

- specify the location of your log files

- parse log data into fields and send it to Elasticsearch

- visualize the log data in Kibana

You need Elasticsearch for storing and searching your data, and Kibana for visualizing and managing it.

To get started quickly, spin up an Elastic Cloud Hosted deployment. Elastic Cloud Hosted is available on AWS, GCP, and Azure. Try it out for free.

To install and run Elasticsearch and Kibana, see Installing the Elastic Stack.

Create an Elasticsearch Serverless project. Elasticsearch Serverless projects are available for Elasticsearch, Observability, and Security use cases.

Install Filebeat on all the servers you want to monitor.

To download and install Filebeat, use the commands that work with your system:

curl -L -O https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-9.3.0-amd64.deb

sudo dpkg -i filebeat-9.3.0-amd64.deb

curl -L -O https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-9.3.0-x86_64.rpm

sudo rpm -vi filebeat-9.3.0-x86_64.rpm

curl -L -O https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-9.3.0-darwin-x86_64.tar.gz

tar xzvf filebeat-9.3.0-darwin-x86_64.tar.gz

curl -L -O https://artifacts.elastic.co/downloads/beats/filebeat/filebeat-9.3.0-linux-x86_64.tar.gz

tar xzvf filebeat-9.3.0-linux-x86_64.tar.gz

Download the Filebeat Windows zip file.

Extract the contents of the zip file into C:\Program Files.

Rename the filebeat-[version]-windows-x86_64 directory to Filebeat.

Open a PowerShell prompt as an Administrator (right-click the PowerShell icon and select Run As Administrator).

From the PowerShell prompt, run the following commands to install Filebeat as a Windows service:

PS > cd 'C:\Program Files\Filebeat'

PS C:\Program Files\Filebeat> .\install-service-filebeat.ps1

If script execution is disabled on your system, you need to set the execution policy for the current session to allow the script to run. For example: PowerShell.exe -ExecutionPolicy UnRestricted -File .\install-service-filebeat.ps1.

The base folder has changed from C:\ProgramData\ to C:\Program Files\ because the latter has stricter permissions. The home path (base for state and logs) is now C:\Program Files\Filebeat-Data.

The install script (install-service-filebeat.ps1) checks whether C:\ProgramData\Filebeat exists and, if it does, moves it to C:\Program Files\Filebeat-Data.

For more details on the installation script refer to: install script.

The commands shown are for AMD platforms, but ARM packages are also available. Refer to the download page for the full list of available packages.

Connections to Elasticsearch and Kibana are required to set up Filebeat.

Set the connection information in filebeat.yml. To locate this configuration file, see Directory layout.

Specify the cloud.id of your Elastic Cloud Hosted deployment, and set cloud.auth to a user who is authorized to set up Filebeat. For example:

cloud.id: "staging:dXMtZWFzdC0xLmF3cy5mb3VuZC5pbyRjZWM2ZjI2MWE3NGJmMjRjZTMzYmI4ODExYjg0Mjk0ZiRjNmMyY2E2ZDA0MjI0OWFmMGNjN2Q3YTllOTYyNTc0Mw=="

cloud.auth: "filebeat_setup:YOUR_PASSWORD"

- This example shows a hard-coded password, but you should store sensitive values in the secrets keystore.
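To check that a cloud.id value was copied intact, it can help to decode it. The following is a minimal sketch, assuming the usual Cloud ID layout of a label, a colon, and a Base64 payload of the form host$elasticsearch-id$kibana-id. The function name decode_cloud_id is hypothetical and is not part of Filebeat.

```python
import base64


def decode_cloud_id(cloud_id: str) -> dict:
    """Split a Cloud ID into its label, host, and cluster identifiers."""
    # Everything before the last ":" is treated as a human-readable label.
    label, _, encoded = cloud_id.rpartition(":")
    # Assumed payload layout: "<host>$<elasticsearch id>$<kibana id>".
    decoded = base64.b64decode(encoded).decode("utf-8")
    # Pad so that a payload with fewer than three parts still unpacks.
    parts = decoded.split("$") + ["", ""]
    return {
        "label": label,
        "host": parts[0],
        "elasticsearch_id": parts[1],
        "kibana_id": parts[2],
    }


if __name__ == "__main__":
    example = (
        "staging:dXMtZWFzdC0xLmF3cy5mb3VuZC5pbyRjZWM2ZjI2MWE3NGJmMjRjZTMz"
        "YmI4ODExYjg0Mjk0ZiRjNmMyY2E2ZDA0MjI0OWFmMGNjN2Q3YTllOTYyNTc0Mw=="
    )
    for key, value in decode_cloud_id(example).items():
        print(f"{key}: {value}")
```

For the example value shown above, the decoded host is us-east-1.aws.found.io. A decoding error or an empty host usually means the value was truncated when it was pasted.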

Set the host and port where Filebeat can find the Elasticsearch installation, and set the username and password of a user who is authorized to set up Filebeat. For example:

output.elasticsearch:
  hosts: ["https://myEShost:9200"]
  username: "filebeat_internal"
  password: "YOUR_PASSWORD"
  ssl:
    enabled: true
    ca_trusted_fingerprint: "b9a10bbe64ee9826abeda6546fc988c8bf798b41957c33d05db736716513dc9c"

- This example shows a hard-coded password, but you should store sensitive values in the secrets keystore.

- This example shows a hard-coded fingerprint, but you should store sensitive values in the secrets keystore. The fingerprint is the hex-encoded SHA-256 hash of a CA certificate. When you start Elasticsearch for the first time, security features such as network encryption (TLS) are enabled by default. If you are using the self-signed certificate that Elasticsearch generates on first startup, add its fingerprint here. The fingerprint is printed in the Elasticsearch startup logs, or you can refer to the connect clients to Elasticsearch documentation for other ways to retrieve it. If you are providing your own SSL certificate to Elasticsearch, refer to the Filebeat documentation on how to set up SSL.
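If you have the CA certificate file but not the startup logs, you can compute the fingerprint yourself. The following is a minimal sketch, assuming the file holds a single PEM-encoded certificate and that the fingerprint is the SHA-256 hash of its DER encoding. The function names and the ca.crt path are hypothetical.

```python
import hashlib
import ssl
from pathlib import Path


def ca_fingerprint(pem_text: str) -> str:
    """Return the lowercase hex SHA-256 fingerprint of one PEM certificate."""
    # PEM is Base64-wrapped DER; the fingerprint is taken over the DER bytes.
    der_bytes = ssl.PEM_cert_to_DER_cert(pem_text.strip())
    return hashlib.sha256(der_bytes).hexdigest()


def ca_fingerprint_from_file(path: str) -> str:
    """Read a PEM file (assumed to contain exactly one certificate)."""
    return ca_fingerprint(Path(path).read_text(encoding="ascii"))


if __name__ == "__main__":
    cert_path = Path("ca.crt")  # hypothetical location of the CA certificate
    if cert_path.exists():
        print(ca_fingerprint_from_file(str(cert_path)))
```

The result is a 64-character hex string in the same form as the ca_trusted_fingerprint value in the example above.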

If you plan to use our pre-built Kibana dashboards, configure the Kibana endpoint. Skip this step if Kibana is running on the same host as Elasticsearch.

setup.kibana:
  host: "mykibanahost:5601"
  username: "my_kibana_user"
  password: "YOUR_PASSWORD"

- The hostname and port of the machine where Kibana is running, for example, mykibanahost:5601. If you specify a path after the port number, include the scheme and port: http://mykibanahost:5601/path.

- The username and password settings for Kibana are optional. If you don’t specify credentials for Kibana, Filebeat uses the username and password specified for the Elasticsearch output.

- To use the pre-built Kibana dashboards, this user must be authorized to view dashboards or have the kibana_admin built-in role.

Set the Elasticsearch endpoint and API key in your Beat configuration file. To find your project's endpoint and create an API key, refer to connection details. For example:

output.elasticsearch:
  hosts: ["ELASTICSEARCH_ENDPOINT_URL"]
  api_key: "YOUR_API_KEY"

- This example shows a hard-coded API key, but you should store sensitive values in the secrets keystore. Refer to Grant access using API keys for more on API key configuration.

Do not use cloud.id or cloud.auth for Elasticsearch Serverless projects. Those settings are for Elastic Cloud Hosted deployments only.

To learn more about required roles and privileges, see Grant users access to secured resources.

You can send data to other outputs, such as Logstash, but that requires additional configuration and setup.

There are several ways to collect log data with Filebeat:

- Data collection modules — simplify the collection, parsing, and visualization of common log formats

- ECS loggers — structure and format application logs into ECS-compatible JSON

- Manual Filebeat configuration

Identify the modules you need to enable. To see a list of available modules, run:

DEB and RPM: filebeat modules list

macOS and Linux (tar.gz): ./filebeat modules list

Windows: PS > .\filebeat.exe modules list

From the installation directory, enable one or more modules. For example, the following command enables the nginx module config:

DEB and RPM: filebeat modules enable nginx

macOS and Linux (tar.gz): ./filebeat modules enable nginx

Windows: PS > .\filebeat.exe modules enable nginx

In the module config under modules.d, change the module settings to match your environment. You must enable at least one fileset in the module. Filesets are disabled by default.

For example, log locations are set based on the OS. If your logs aren’t in default locations, set the paths variable:

- module: nginx
  access:
    enabled: true
    var.paths: ["/var/log/nginx/access.log*"]

To see the full list of variables for a module, see the documentation under Modules.
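Before you set a custom paths value, it is worth confirming that the pattern matches the files you expect. The following is a rough sketch that uses Python's glob module; it only approximates how Filebeat expands patterns, and the function name matching_logs is hypothetical.

```python
import glob


def matching_logs(patterns: list[str]) -> dict[str, list[str]]:
    """Map each glob pattern to the sorted list of files it currently matches."""
    # Note: Python's glob is used here as an approximation of Filebeat's matching.
    return {pattern: sorted(glob.glob(pattern)) for pattern in patterns}


if __name__ == "__main__":
    # Pattern taken from the nginx example above.
    for pattern, files in matching_logs(["/var/log/nginx/access.log*"]).items():
        print(f"{pattern}: {len(files)} file(s)")
        for name in files:
            print(f"  {name}")
```

A pattern that matches no files usually points to a wrong directory or to logs that have not been written yet.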

To test your configuration file, change to the directory where the Filebeat binary is installed, and run Filebeat in the foreground with the following options specified: ./filebeat test config -e. Make sure your config files are in the path expected by Filebeat (see Directory layout), or use the -c flag to specify the path to the config file.

For more information about configuring Filebeat, also see:

- Configure Filebeat

- Config file format

- filebeat.reference.yml: This reference configuration file shows all non-deprecated options. You'll find it in the same location as filebeat.yml.

While Filebeat can be used to ingest raw, plain-text application logs, we recommend structuring your logs at ingest time. This lets you extract fields, like log level and exception stack traces.

Elastic simplifies this process by providing application log formatters in a variety of popular programming languages. These plugins format your logs into ECS-compatible JSON, which removes the need to manually parse logs.

See ECS loggers to get started.

If you're unable to find a module for your file type, or can't change your application's log output, see configure the input manually.

Filebeat comes with predefined assets for parsing, indexing, and visualizing your data. To load these assets:

Make sure the user specified in filebeat.yml is authorized to set up Filebeat.

From the installation directory, run:

DEB and RPM: filebeat setup -e

macOS and Linux (tar.gz): ./filebeat setup -e

Windows: PS > .\filebeat.exe setup -e

-e is optional and sends output to standard error instead of the configured log output.

By default Windows log files are stored in C:\Program Files\Filebeat-Data\logs.

In versions before 9.0.6, the default location for Windows log files was C:\ProgramData\filebeat\logs.

This step loads the recommended index template for writing to Elasticsearch and deploys the sample dashboards for visualizing the data in Kibana.

This step does not load the ingest pipelines used to parse log lines. By default, ingest pipelines are set up automatically the first time you run the module and connect to Elasticsearch.

A connection to Elasticsearch (or Elastic Cloud Hosted) is required to set up the initial environment. If you're using a different output, such as Logstash, see:

Filebeat should not be used to ingest its own log as this may lead to an infinite loop.

Before starting Filebeat, modify the user credentials in filebeat.yml and specify a user who is authorized to publish events.

To start Filebeat, run:

sudo service filebeat start

If you use an init.d script to start Filebeat, you can’t specify command line flags (see Command reference). To specify flags, start Filebeat in the foreground.

Also see Filebeat and systemd.

sudo chown root filebeat.yml

sudo chown root modules.d/nginx.yml

sudo ./filebeat -e

- You’ll be running Filebeat as root, so you need to change ownership of the configuration file and any configurations enabled in the modules.d directory, or run Filebeat with --strict.perms=false specified. See Config File Ownership and Permissions.
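To see in advance whether a configuration file is likely to pass the ownership and permission checks, you can inspect it yourself. The following is a minimal sketch, assuming the checks require the file to be owned by root or by the user that runs Filebeat, and to be writable only by its owner. The function name is hypothetical, and Config File Ownership and Permissions remains the authoritative description.

```python
import os
import stat


def config_permission_problems(path: str, run_as_uid: int) -> list[str]:
    """Return a list of likely ownership or permission problems for a config file."""
    info = os.stat(path)
    problems = []
    # Assumed rule: the owner must be root (uid 0) or the user running Filebeat.
    if info.st_uid not in (0, run_as_uid):
        problems.append(f"{path}: owned by uid {info.st_uid}, expected 0 or {run_as_uid}")
    # Assumed rule: group and others must not have write access (0644 or stricter).
    if info.st_mode & (stat.S_IWGRP | stat.S_IWOTH):
        mode = stat.S_IMODE(info.st_mode)
        problems.append(f"{path}: mode {mode:04o} is writable by group or others")
    return problems


if __name__ == "__main__":
    # Paths match the chown commands above; uid 0 because Filebeat runs as root.
    for config in ("filebeat.yml", "modules.d/nginx.yml"):
        if os.path.exists(config):
            for problem in config_permission_problems(config, run_as_uid=0):
                print(problem)
```

An empty result suggests the file should pass; otherwise, adjust the owner or mode rather than disabling the checks.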

PS C:\Program Files\filebeat> Start-Service filebeat

By default Windows log files are stored in C:\Program Files\Filebeat-Data\logs.

In versions before 9.0.6, the default location for Windows log files was C:\ProgramData\filebeat\logs.

Filebeat should begin streaming events to Elasticsearch.

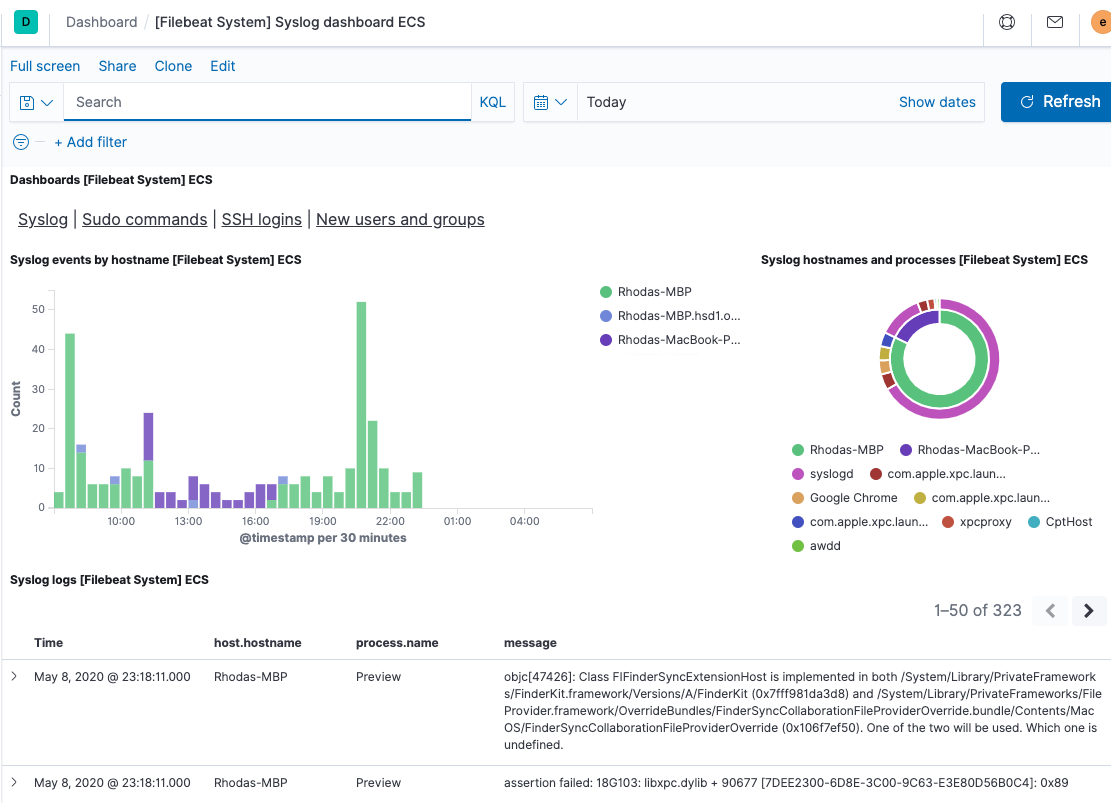

Filebeat comes with pre-built Kibana dashboards and UIs for visualizing log data. You loaded the dashboards earlier when you ran the setup command.

To open the dashboards:

Launch Kibana:

- Log in to your Elastic Cloud account.

- Navigate to the Kibana endpoint in your deployment.

Point your browser to http://localhost:5601, replacing localhost with the name of the Kibana host.

Navigate to your Elasticsearch Serverless project in the Elastic Cloud console.

In the side navigation, click Discover. To see Filebeat data, make sure the predefined filebeat-* data view is selected.

Tip: If you don’t see data in Kibana, try changing the time filter to a larger range. By default, Kibana shows the last 15 minutes.

In the side navigation, click Dashboard, then select the dashboard that you want to open.

The dashboards are provided as examples. We recommend that you customize them to meet your needs.

Now that you have your logs streaming into Elasticsearch, learn how to unify your logs, metrics, uptime, and application performance data.

Ingest data from other sources by installing and configuring other Elastic Beats:

| Elastic Beats | To capture |
| --- | --- |
| Metricbeat | Infrastructure metrics |
| Winlogbeat | Windows event logs |
| Heartbeat | Uptime information |
| APM | Application performance metrics |
| Auditbeat | Audit events |

Use the Observability apps in Kibana to search across all your data:

| Elastic apps | Use to |
| --- | --- |
| Metrics app | Explore metrics about systems and services across your ecosystem |
| Logs app | Tail related log data in real time |
| Uptime app | Monitor availability issues across your apps and services |
| APM app | Monitor application performance |
| SIEM app | Analyze security events |