
In the previous post, we discovered what LangChain4j is and how to:

- Have a discussion with LLMs by implementing a ChatLanguageModel and a ChatMemory

- Retain chat history in memory to recall the context of a previous discussion with an LLM

This blog post covers how to:

- Create vector embeddings from text examples

- Store vector embeddings in the Elasticsearch embedding store

- Search for similar vectors

Create embeddings

To create embeddings, we first need to define which EmbeddingModel to use. For example, we can reuse the mistral model from the previous post, which was running with Ollama:

A model is able to generate vectors from text. Here we can check the number of dimensions generated by the model:
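To make the notion of dimensions concrete without calling a real model, here is a minimal plain-Java sketch. ToyEmbedder is a hypothetical stand-in: it is not part of LangChain4j and it is not how mistral computes embeddings, since it only hashes words into a fixed-size array. It illustrates one point, namely that a model emits vectors of one fixed length whatever the length of the input text.

```java
import java.util.Locale;

/** Toy stand-in for an embedding model (hypothetical; NOT a real language model). */
class ToyEmbedder {
    private final int dimension;

    ToyEmbedder(int dimension) {
        if (dimension <= 0) {
            throw new IllegalArgumentException("dimension must be positive");
        }
        this.dimension = dimension;
    }

    /** Every vector produced by this embedder has exactly this many components. */
    int dimension() {
        return dimension;
    }

    /** Hashes each lower-cased word into one slot of a fixed-size vector and counts occurrences. */
    float[] embed(String text) {
        float[] vector = new float[dimension];
        for (String word : text.toLowerCase(Locale.ROOT).split("\\W+")) {
            if (word.isEmpty()) {
                continue; // split() can yield an empty first token
            }
            int slot = Math.floorMod(word.hashCode(), dimension);
            vector[slot] += 1.0f;
        }
        return vector;
    }
}
```

With new ToyEmbedder(8), both a two-word text and a full paragraph come back as arrays of length 8. A real model behaves the same way, only with a much larger dimension and with values that carry meaning.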

To generate vectors from a text, we can use:

If we also want to attach metadata so that we can later filter on things like text, price, or release date, we can use Metadata.from(). For example, here we add the game name as a metadata field:
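The idea of carrying metadata next to the text can be sketched in plain Java. ToySegment and the gameName key are hypothetical names chosen for illustration; this is not the LangChain4j TextSegment or Metadata implementation, only a picture of why metadata makes filtering possible.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

/** Hypothetical pairing of a text with metadata; not the LangChain4j TextSegment class. */
class ToySegment {
    final String text;
    final Map<String, String> metadata;

    ToySegment(String text, Map<String, String> metadata) {
        this.text = text;
        // Defensive copy so later changes to the caller's map do not leak in.
        this.metadata = new LinkedHashMap<>(metadata);
    }

    /** Keeps only the segments whose metadata has the given key set to the expected value. */
    static List<ToySegment> filterBy(List<ToySegment> segments, String key, String expected) {
        List<ToySegment> kept = new ArrayList<>();
        for (ToySegment segment : segments) {
            if (expected.equals(segment.metadata.get(key))) {
                kept.add(segment);
            }
        }
        return kept;
    }
}
```

Calling ToySegment.filterBy(segments, "gameName", "Out Run") returns only the segments tagged with that game name, without looking at the text or the vector at all.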

If you'd like to run this code, please check out the Step5EmbedddingsTest.java class.

Add Elasticsearch to store our vectors

LangChain4j provides an in-memory embedding store. This is useful to run simple tests:
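What an in-memory store does can be pictured with a short plain-Java sketch. ToyInMemoryStore is a hypothetical class written for this illustration, not the LangChain4j implementation: it keeps every vector in a list on the JVM heap and reports how many bytes the raw float data takes.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.UUID;

/** Hypothetical list-backed store: a sketch of the idea, not LangChain4j's in-memory store. */
class ToyInMemoryStore {
    /** One stored entry: a generated id, the vector, and the original text. */
    private static final class Entry {
        final String id;
        final float[] vector;
        final String text;

        Entry(String id, float[] vector, String text) {
            this.id = id;
            this.vector = vector;
            this.text = text;
        }
    }

    private final List<Entry> entries = new ArrayList<>();

    /** Copies the vector onto the heap and returns the generated id. */
    String add(float[] vector, String text) {
        String id = UUID.randomUUID().toString();
        entries.add(new Entry(id, vector.clone(), text));
        return id;
    }

    int size() {
        return entries.size();
    }

    /** Lower bound on heap usage: 4 bytes per float component, ignoring object overhead. */
    long rawVectorBytes() {
        long bytes = 0;
        for (Entry entry : entries) {
            bytes += 4L * entry.vector.length;
        }
        return bytes;
    }
}
```

The arithmetic shows the limit quickly: one million vectors of 1,024 floats each need 1,000,000 × 1,024 × 4 bytes, roughly 4.1 GB, for the raw numbers alone.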

But obviously, this cannot work with a much bigger dataset, because this store keeps everything in memory and our servers don't have infinite memory. Instead, we can store our embeddings in Elasticsearch, which is by definition "elastic" and can scale up and out with your data. For that, let's add Elasticsearch to our project:

As you may have noticed, we also added the Elasticsearch Testcontainers module to the project, so we can start an Elasticsearch instance from our tests:

To use Elasticsearch as an embedding store, you "just" have to switch from the LangChain4j in-memory datastore to the Elasticsearch datastore:

This will store your vectors in Elasticsearch in a default index. You can also change the index name to something more meaningful:

If you'd like to run this code, please check out the Step6ElasticsearchEmbedddingsTest.java class.

Search for similar vectors

To search for similar vectors, we first need to transform our question into a vector representation using the same model we used previously. We already did that, so it's not hard to do this again. Note that we don't need the metadata in this case:

We can build a search request with this representation of our question and ask the embedding store to find the top matching vectors:
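Conceptually, finding the top vectors means scoring every stored vector against the question vector and keeping the best ones. The following plain-Java sketch does this by brute force with cosine similarity. ToyVectorSearch, Match, and the maxResults parameter are hypothetical names for this illustration; the sketch is not the LangChain4j or Elasticsearch implementation, which use an index rather than a full scan in the default configuration.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;
import java.util.Map;

/** Hypothetical brute-force nearest-neighbour search over named vectors. */
class ToyVectorSearch {
    /** A hit: the name of the stored vector and its similarity to the query. */
    static final class Match {
        final String name;
        final double score;

        Match(String name, double score) {
            this.name = name;
            this.score = score;
        }
    }

    /** Cosine similarity: dot(a, b) / (|a| * |b|). Returns 0 if either vector has zero magnitude. */
    static double cosine(float[] a, float[] b) {
        if (a.length != b.length) {
            throw new IllegalArgumentException("dimension mismatch");
        }
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += (double) a[i] * b[i];
            normA += (double) a[i] * a[i];
            normB += (double) b[i] * b[i];
        }
        if (normA == 0 || normB == 0) {
            return 0;
        }
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    /** Scores every stored vector, sorts by descending score, keeps the first maxResults. */
    static List<Match> search(Map<String, float[]> stored, float[] query, int maxResults) {
        List<Match> matches = new ArrayList<>();
        for (Map.Entry<String, float[]> entry : stored.entrySet()) {
            matches.add(new Match(entry.getKey(), cosine(query, entry.getValue())));
        }
        matches.sort(Comparator.comparingDouble((Match m) -> m.score).reversed());
        return new ArrayList<>(matches.subList(0, Math.min(maxResults, matches.size())));
    }
}
```

Each Match carries a name and a score, which is the same kind of information the real search results expose through the metadata and the score.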

We can now iterate over the results and print some information, such as the game name, which comes from the metadata, and the score:

As expected, this gives us "Out Run" as the first hit:

If you'd like to run this code, please check out the Step7SearchForVectorsTest.java class.

Behind the scenes

The default configuration for the Elasticsearch embedding store uses the approximate kNN query behind the scenes.

But this can be changed by providing the embedding store with a configuration (ElasticsearchConfigurationScript) other than the default one (ElasticsearchConfigurationKnn):

Behind the scenes, the ElasticsearchConfigurationScript implementation runs a script_score query using a cosineSimilarity function.
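A raw cosine similarity lies between -1 and 1, while Elasticsearch does not accept negative scores, so the cosine is usually rescaled before it is returned as a score. The Elasticsearch documentation gives (1 + cosine) / 2 for kNN search on fields that use cosine similarity. Whether the script used by ElasticsearchConfigurationScript applies exactly this formula is an assumption here, and ToyRelevanceScore is a hypothetical name. The sketch only shows that such a rescaling changes the score values without changing their order.

```java
/** Hypothetical helper: rescales a cosine similarity into a non-negative score. */
class ToyRelevanceScore {
    /** Maps a cosine in [-1, 1] to a score in [0, 1]; out-of-range inputs are clamped. */
    static double fromCosine(double cosine) {
        double clamped = Math.max(-1.0, Math.min(1.0, cosine));
        return (1.0 + clamped) / 2.0;
    }

    /** Inverse mapping: recovers the cosine from a score in [0, 1]. */
    static double toCosine(double score) {
        return 2.0 * score - 1.0;
    }
}
```

Because the mapping is strictly increasing, a vector with a higher cosine always gets a higher score, so ranking by score and ranking by cosine give the same order.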

Basically, when calling:

This now calls:

In that case, the order of the results does not change; only the score is adjusted, because the cosineSimilarity call does not use any approximation but computes the cosine for each of the matching vectors:

If you'd like to run this code, please check out the Step7SearchForVectorsTest.java class.

Conclusion

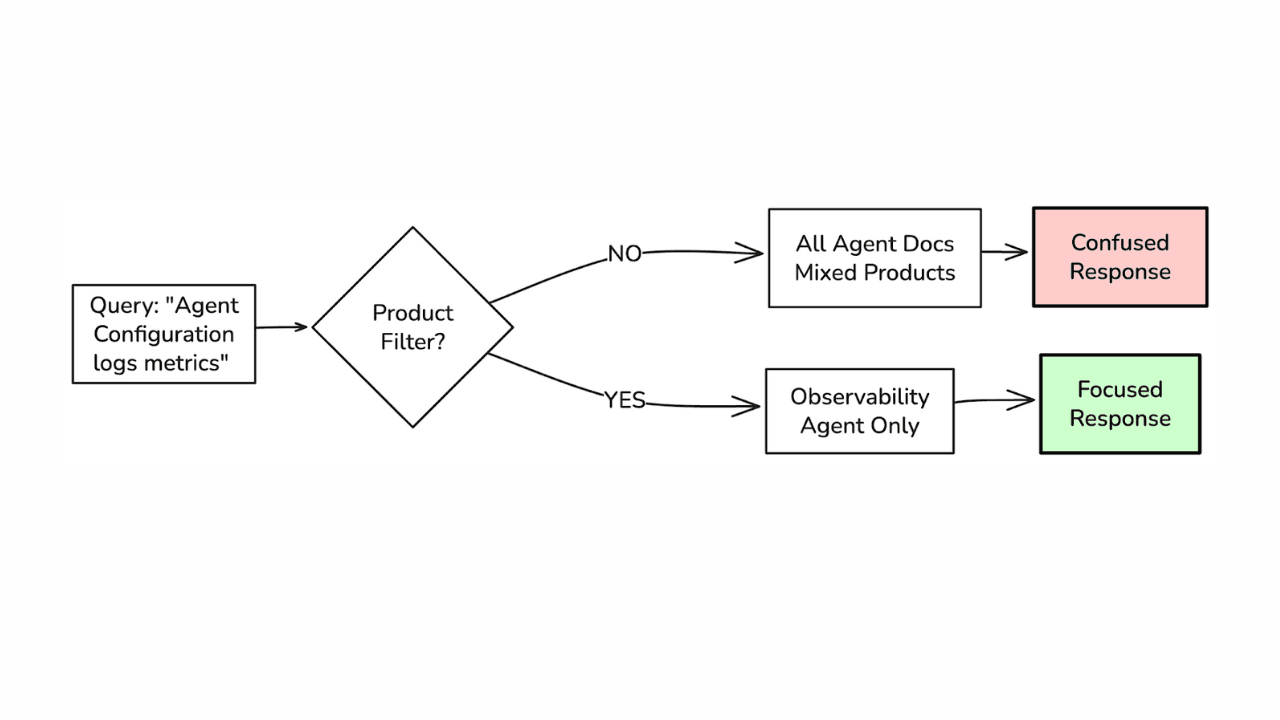

We have covered how easily you can generate embeddings from your text and how you can store and search for the closest neighbours in Elasticsearch using 2 different approaches:

- Using the approximate and fast knn query with the default ElasticsearchConfigurationKnn option

- Using the exact but slower script_score query with the ElasticsearchConfigurationScript option

The next step will be about building a full RAG application, based on what we learned here.