From vector search to powerful REST APIs, Elasticsearch offers developers the most extensive search toolkit. Dive into our sample notebooks in the Elasticsearch Labs repo to try something new. You can also start your free trial or run Elasticsearch locally today.

Search is the process of locating the most relevant information based on your search query or combined queries and relevant search results are documents that best match these queries. Although there are several challenges and methods associated with search, the ultimate goal remains the same, to find the best possible answer to your question.

Considering this goal, in this blog post, we will explore different approaches to retrieving information using Elasticsearch, with a specific focus on text search: lexical and semantic search.

Prerequisites

To accomplish this, we will provide Python examples that demonstrate various search scenarios on a dataset generated to simulate e-commerce product information.

This dataset contains over 2,500 products, each with a description. These products are categorized into 76 distinct product categories, with each category containing a varying number of products, as shown below:

Treemap visualization - top 22 values of category.keyword (product categories)

For the setup you will need:

- Python 3.6 or later

- The Elastic Python client

- Elastic 8.8 deployment or later, with 8GB memory machine learning node

- The Elastic Learned Sparse EncodeR model that comes pre-loaded into Elastic installed and started on your deployment

We will be using Elastic Cloud, a free trial is available.

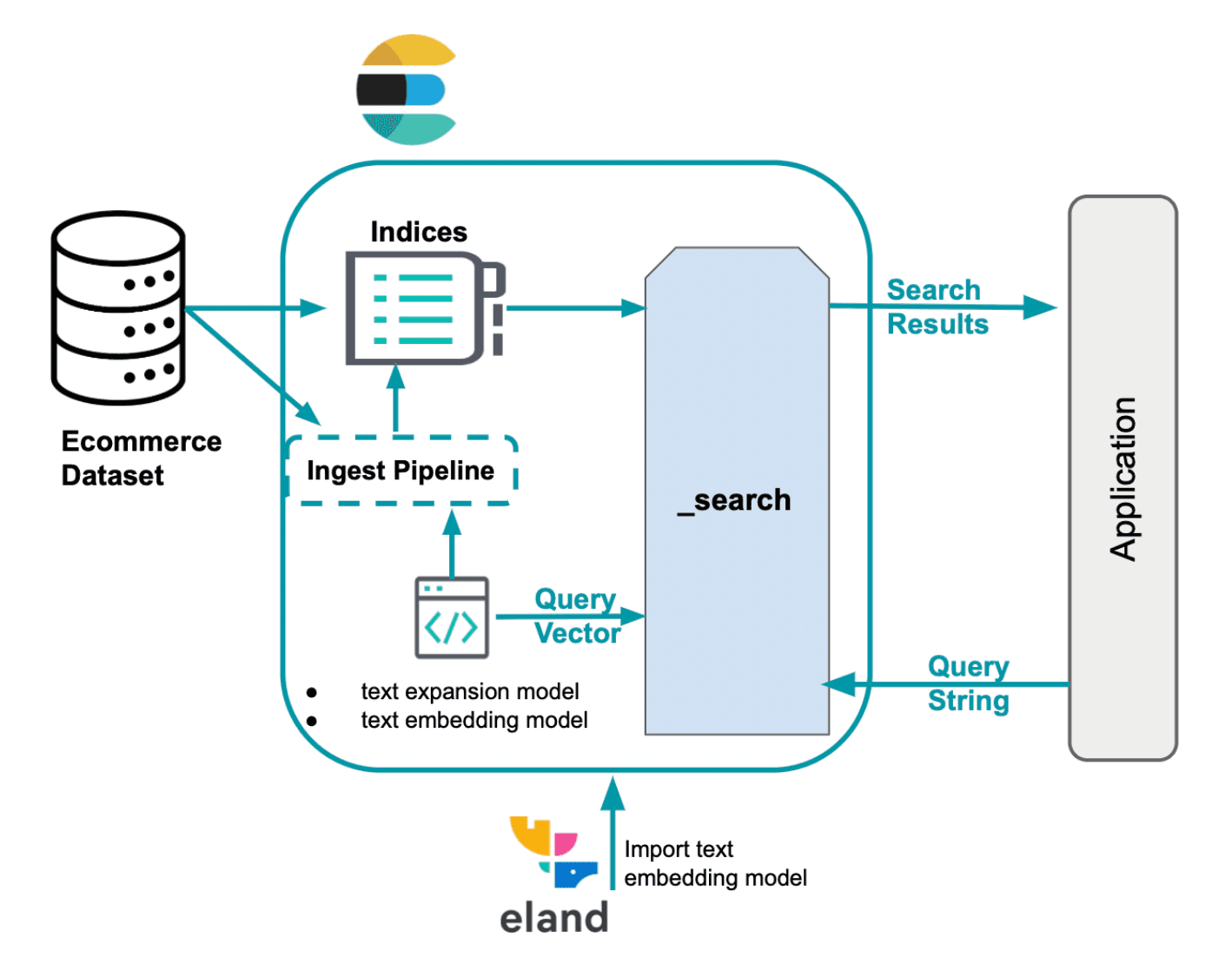

Besides the search queries provided in this blog post, a Python notebook will guide you through the following processes:

- Establish a connection to our Elastic deployment using the Python client

- Load a text embedding model into the Elasticsearch cluster

- Create an index with mappings for indexing feature vectors and dense vectors.

- Create an ingest pipeline with inference processors for text embedding and text expansion

Lexical search - sparse retrieval

The classic way documents are ranked for relevance by Elasticsearch based on a text query uses the Lucene implementation of the BM25 model, a sparse model for lexical search. This method follows the traditional approach for text search, looking for exact term matches.

To make this search possible, Elasticsearch converts text field data into a searchable format by performing text analysis.

Text analysis is performed by an analyzer, a set of rules to govern the process of extracting relevant tokens for searching. An analyzer must have exactly one tokenizer. The tokenizer receives a stream of characters and breaks it up into individual tokens (usually individual words), like in the example below:

String tokenization for lexical search

Output

In this example we are using the default analyzer, the standard analyzer, which works well for most use cases as it provides English grammar based tokenization. Tokenization enables matching on individual terms, but each token is still matched literally.

If you want to personalize your search experience you can choose a different built-in analyzer. Example, by updating the code to use the stop analyzer it will break the text into tokens at any non-letter character with support for removing stop words.

Output

When the built-in analyzers do not fulfill your needs, you can create a custom analyzer, which uses the appropriate combination of zero or more character filters, a tokenizer and zero or more token filters.

In the above example that combines a tokenizer and token filters, the text will be lowercased by the lowercase filter before being processed by the synonyms token filter.

Lexical matching

BM25 will measure the relevance of documents to a given search query based on the frequency of terms and its importance.

The code below performs a match query, searching for up to two documents considering "description" field values from the "ecommerce-search" index and the search query "Comfortable furniture for a large balcony".

Refining the criteria for a document to be considered a match for this query can improve the precision. However, more specific results come at the cost of a lower tolerance for variations.

Output

By analyzing the output, the most relevant result is the "Barbie Dreamhouse" product, in the "Toys" category, and its description is highly relevant as it includes the terms "furniture", "large" and "balcony", this is the only product with 3 terms in the description that match the search query, the product is also the only one with the term "balcony" in the description.

The second most relevant product is a "Comfortable Rocking Chair" categorized as "Indoor Furniture" and its description includes the terms "comfortable" and "furniture". Only 3 products in the dataset match at least 2 terms of this search query, this product is one of them.

"Comfortable" appears in the description of 105 products and "furniture" in the description of 4 products with 4 different categories: Toys, Indoor Furniture, Outdoor Furniture and 'Dog and Cat Supplies & Toys'.

As you could see, the most relevant product considering the query is a toy and the second most relevant product is indoor furniture. If you want detailed information about the score computation to know why these documents are a match, you can set the explain __query parameter to true.

Despite both results being the most relevant ones, considering both the number of documents and the occurrence of terms in this dataset, the intention behind the query "Comfortable furniture for a large balcony" is to search for furniture for an actual large balcony, excluding among others, toys and indoor furniture.

Lexical search is relatively simple and fast, but it has limitations since it is not always possible to know all the possible terms and synonyms without necessarily knowing the user's intention and queries. A common phenomenon in the usage of natural language is vocabulary mismatch. Research shows that, on average, 80% of the time different people (experts in the same field) will name the same thing differently.

These limitations motivate us to look for other scoring models that incorporate semantic knowledge. Transformer-based models, which excel at processing sequential input tokens like natural language, capture the underlying meaning of your search by considering mathematical representations of both documents and queries. This allows for a dense, context aware vector representation of text, powering Semantic Search, a refined way to find relevant content.

Semantic search - dense retrieval

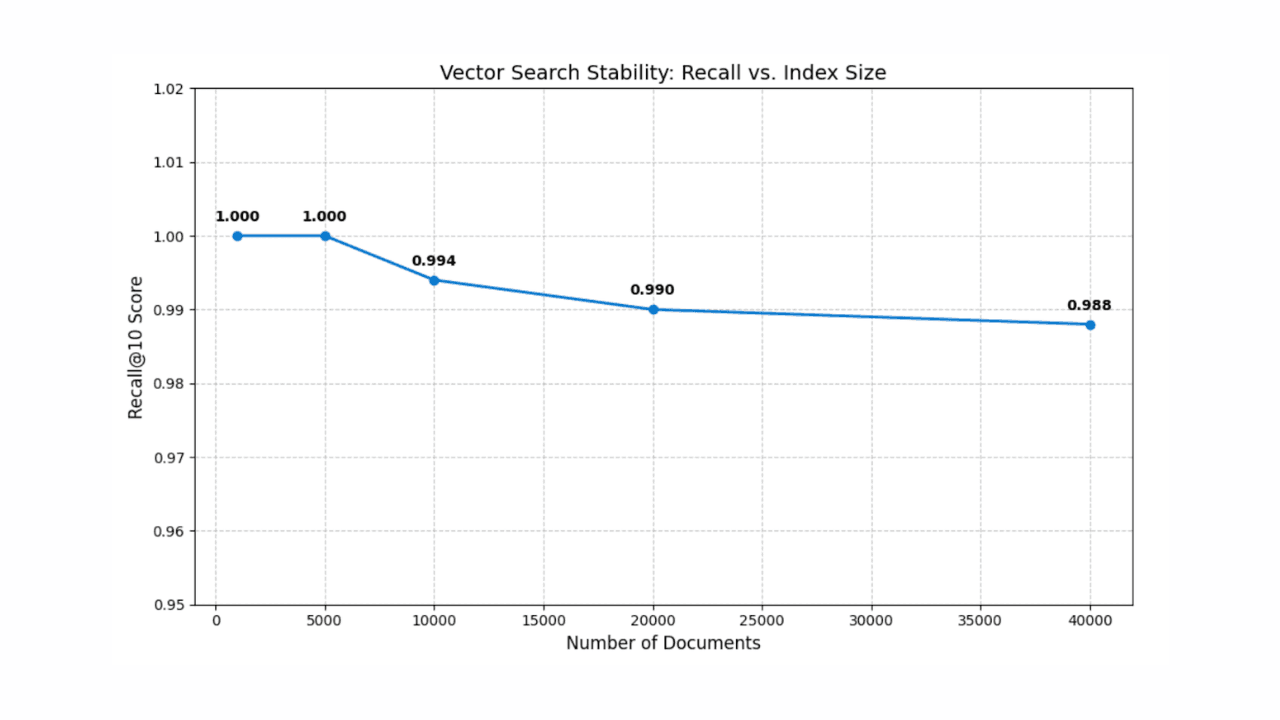

In this context, after converting your data into meaningful vector values, k-nearest neighbor (kNN) search algorithm is utilized to find vector representations in a dataset that are most similar to a query vector. Elasticsearch supports two methods for kNN search, exact brute-force kNN and approximate kNN, also known as ANN.

Brute-force kNN guarantees accurate results but doesn't scale well with large datasets. Approximate kNN efficiently finds approximate nearest neighbors by sacrificing some accuracy for improved performance.

With Lucene's support for kNN search and dense vector indexes, Elasticsearch takes advantage of the Hierarchical Navigable Small World (HNSW) algorithm, which demonstrates strong search performance across a variety of ann-benchmark datasets. An approximate kNN search can be performed in Python using the below example code.

Semantic search with approximate kNN

This code block uses Elasticsearch's kNN to return up to two products with a description similar to the vectorized query (query_vector_build) of "Comfortable furniture for a large balcony" considering the embeddings of the “description” field in the products dataset.

The products embeddings were previously generated in an ingest pipeline with an inference processor containing the "all-mpnet-base-v2" text embedding model to infer against data that was being ingested in the pipeline.

This model was chosen based on the evaluation of pretrained models using "sentence_transformers.evaluation" where different classes are used to assess a model during training. The "all-mpnet-base-v2" model demonstrated the best average performance according to the Sentence-Transformers ranking and also secured a favorable position on the Massive Text Embedding Benchmark (MTEB) Leaderboard. The model pre-trained microsoft/mpnet-base model and fine-tuned on a 1B sentence pairs dataset, it maps sentences to a 768 dimensional dense vector space.

Alternatively, there are many other models available that can be used, especially those fine-tuned for your domain-specific data.

Output

The output may vary based on the chosen model, filters and approximate kNN tune.

The kNN search results are both in the "Outdoor Furniture" category, even though the word "outdoor" was not explicitly mentioned as part of the query, which highlights the importance of semantics understanding in the context.

Dense vector search offers several advantages:

- Enabling semantic search

- Scalability to handle very large datasets

- Flexibility to handle a wide range of data types

However, dense vector search also comes with its own challenges:

- Selecting the right embedding model for your use case

- Once a model is chosen, fine-tuning the model to optimize performance on a domain-specific dataset might be necessary, a process that demands the involvement of domain experts

- Additionally, indexing high-dimensional vectors can be computationally expensive

Semantic search - learned sparse retrieval

Let’s explore an alternative approach: learned sparse retrieval, another way to perform semantic search.

As a sparse model, it utilizes Elasticsearch's Lucene-based inverted index, which benefits from decades of optimizations. However, this approach goes beyond simply adding synonyms with lexical scoring functions like BM25. Instead, it incorporates learned associations using a deeper language-scale knowledge to optimize for relevance.

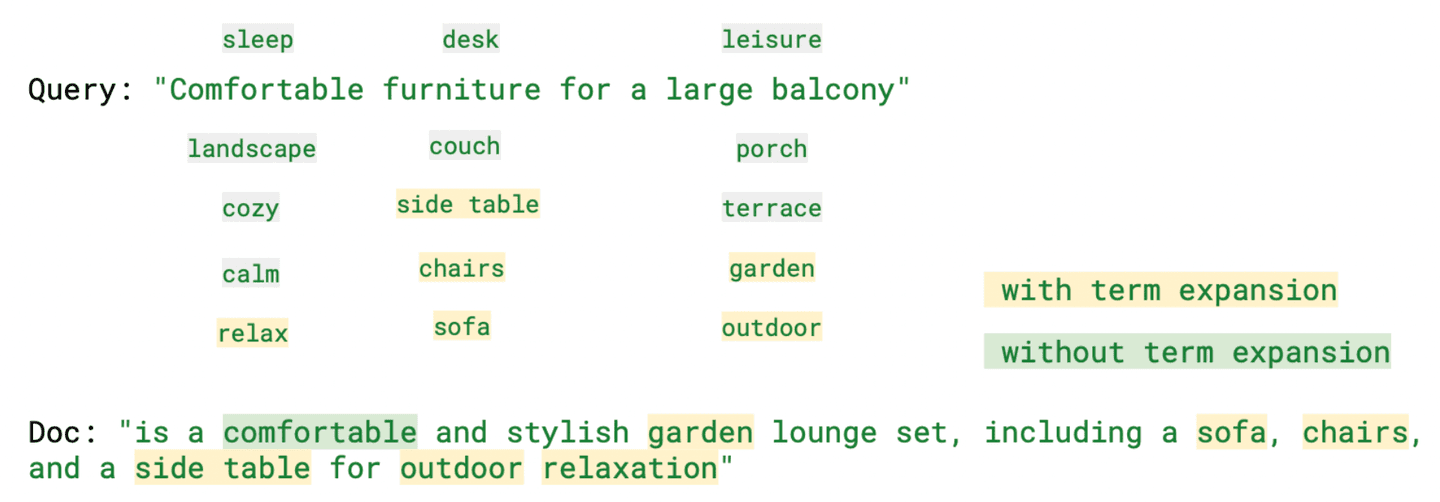

By expanding search queries to include relevant terms that are not present in the original query, the Elastic Learned Sparse Encoder improves sparse vector embeddings, as you can see in the example below.

Sparse vector search with Elastic Learned Sparse Encoder

Output

The results in this case include the "Garden Furniture" category, which offers products quite similar to "Outdoor Furniture".

By analyzing "ml.tokens", the "rank_features" field containing Learned Sparse Retrieval generated tokens, it becomes apparent that among the various tokens generated there are terms that, while not part of the search query, are still relevant in meaning, such as "relax" (comfortable), "sofa" (furniture) and "outdoor" (balcony).

The image below highlights some of these terms alongside the query, both with and without term expansion.

As observed, this model provides a context-aware search and helps mitigate the vocabulary mismatch problem while providing more interpretable results. It can even outperform dense vector models when no domain-specific retraining is applied.

Hybrid search: relevant results by combining lexical and semantic search

When it comes to search, there is no universal solution. Each of these retrieval methods has its strengths but also its challenges. Depending on the use case, the best option may change. Often the best results across retrieval methods can be complementary. Hence, to improve relevance, we’ll look at combining the strengths of each method.

There are multiple ways to implement a hybrid search, including linear combination, giving a weight to each score and reciprocal rank fusion (RRF), where specifying a weight is not necessary.

Elasticsearch: best of both worlds with lexical and semantic search

In this code, we performed a hybrid search with two queries having the value "A dining table and comfortable chairs for a large balcony". Instead of using "furniture" as a search term, we are specifying what we are looking for, and both searches are considering the same field values, "description". The ranking is determined by a linear combination with equal weight for the BM25 and ELSER scores.

Output

In the code below, we will use the same value for the query, but combine the scores from BM25 (query parameter) and kNN (knn parameter) using the reciprocal rank fusion method to combine and rank the documents.

RRF functionality is in technical preview. The syntax will likely change before GA.

Output

Here we could also use different fields and values; some of these examples are available in the Python notebook.

As you can see, with Elasticsearch you have the best of both worlds: the traditional lexical search and vector search, whether sparse or dense, to reach your goal and find the best possible answer to your question.

If you want to continue learning about the approaches mentioned here, these blogs can be useful:

- Improving information retrieval in the Elastic Stack: Hybrid retrieval

- Vector search in Elasticsearch: The rationale behind the design

- How to get the best of lexical and AI-powered search with Elastic’s vector database

- Introducing Elastic Learned Sparse Encoder: Elastic’s AI model for semantic search

- Improving information retrieval in the Elastic Stack: Introducing Elastic Learned Sparse Encoder, our new retrieval model

Elasticsearch provides a vector database, along with all the tools you need to build vector search:

- Elasticsearch vector database

- Vector search use cases with Elastic

Conclusion

In this blog post, we explored various approaches to retrieving information using Elasticsearch, focusing specifically on text, lexical and semantic search. To demonstrate this, we provided Python examples showcasing different search scenarios using a dataset containing e-commerce product information.

We reviewed the classic lexical search with BM25 and discussed its benefits and challenges, such as vocabulary mismatch. We emphasized the importance of incorporating semantic knowledge to overcome this issue. Additionally, we discussed dense vector search, which enables semantic search, and covered the challenges associated with this retrieval method, including the computational cost when indexing high-dimensional vectors.

On the other hand, we mentioned that sparse vectors compress exceptionally well. Thus, we discussed Elastic's Learned Sparse Encoder, which expands search queries to include relevant terms not present in the original query.

There is no one-size-fits-all solution when it comes to search. Each retrieval method has its strengths and challenges. Therefore, we also discussed the concept of hybrid search.

As you could see, with Elasticsearch, you can have the best of both worlds: traditional lexical search and vector search!

Ready to get started? Check the available Python notebook and begin a free trial of Elastic Cloud.