Add and remove nodes in your cluster

editAdd and remove nodes in your cluster

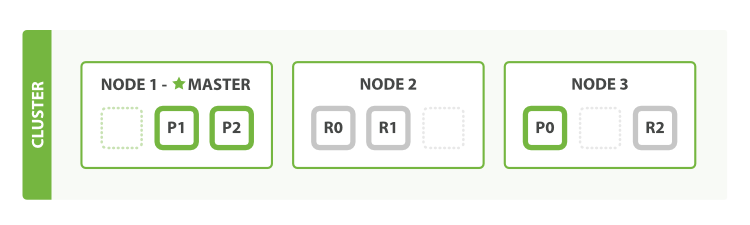

When you start an instance of Elasticsearch, you are starting a node. An Elasticsearch cluster is a group of nodes that have the same cluster.name attribute. As nodes join or leave a cluster, the cluster automatically reorganizes itself to evenly distribute the data across the available nodes.

If you are running a single instance of Elasticsearch, you have a cluster of one node. All primary shards reside on the single node. No replica shards can be allocated, so the cluster health status remains yellow. The cluster is fully functional but is at risk of data loss in the event of a failure.

You add nodes to a cluster to increase its capacity and reliability. By default, a node is both a data node and eligible to be elected as the master node that controls the cluster. You can also configure a new node for a specific purpose, such as handling ingest requests. For more information, see Nodes.

When you add more nodes to a cluster, it automatically allocates replica shards. When all primary and replica shards are active, the cluster state changes to green.
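The yellow-to-green transition described above can be illustrated with a deliberately simplified model. The sketch below assumes every node is a data node and ignores allocation filters, disk watermarks, and per-index settings; the function name `cluster_health` is hypothetical and is not part of any Elasticsearch client. It relies only on the rule that a replica is never allocated to the same node as its primary.

```python
def cluster_health(node_count: int, replicas_per_primary: int) -> str:
    """Simplified health model: copies of the same shard never share a node."""
    if node_count < 1:
        return "red"  # no node is available to host the primary shards
    # Each shard needs one node for its primary plus one node per replica.
    copies_needed = 1 + replicas_per_primary
    return "green" if node_count >= copies_needed else "yellow"


print(cluster_health(1, 1))  # yellow: the single node holds primaries only
print(cluster_health(2, 1))  # green: the second node can host the replicas
```

A real cluster reports its status through the cluster health API; this model only shows why a one-node cluster with replicas configured cannot reach green.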

Enroll nodes in an existing cluster

You can enroll additional nodes on your local machine to experiment with how an Elasticsearch cluster with multiple nodes behaves.

To add a node to a cluster running on multiple machines, you must also set discovery.seed_hosts so that the new node can discover the rest of its cluster.

When Elasticsearch starts for the first time, the security auto-configuration process binds the HTTP layer to 0.0.0.0, but only binds the transport layer to localhost. This intended behavior ensures that you can start a single-node cluster with security enabled by default without any additional configuration.

Before enrolling a new node, additional actions such as binding to an address other than localhost or satisfying bootstrap checks are typically necessary in production clusters. During that time, an auto-generated enrollment token could expire, which is why enrollment tokens aren’t generated automatically.

Additionally, only nodes on the same host can join the cluster without additional configuration. If you want nodes from another host to join your cluster, you need to set transport.host to a supported value (for example, by uncommenting the suggested value of 0.0.0.0) or to an IP address that’s bound to an interface where other hosts can reach it. Refer to transport settings for more information.

To enroll new nodes in your cluster, create an enrollment token with the elasticsearch-create-enrollment-token tool on any existing node in your cluster. You can then start a new node with the --enrollment-token parameter so that it joins an existing cluster.

- In a separate terminal from where Elasticsearch is running, navigate to the directory where you installed Elasticsearch and run the elasticsearch-create-enrollment-token tool to generate an enrollment token for your new nodes.

      bin\elasticsearch-create-enrollment-token -s node

  Copy the enrollment token, which you’ll use to enroll new nodes with your Elasticsearch cluster.

- From the installation directory of your new node, start Elasticsearch and pass the enrollment token with the --enrollment-token parameter.

      bin\elasticsearch --enrollment-token <enrollment-token>

  Elasticsearch automatically generates certificates and keys in the following directory:

      config\certs

- Repeat the previous step for any new nodes that you want to enroll.

For more information about discovery and shard allocation, refer to Discovery and cluster formation and Cluster-level shard allocation and routing settings.

Master-eligible nodes

As nodes are added or removed, Elasticsearch maintains an optimal level of fault tolerance by automatically updating the cluster’s voting configuration, which is the set of master-eligible nodes whose responses are counted when making decisions such as electing a new master or committing a new cluster state.

It is recommended to have a small and fixed number of master-eligible nodes in a cluster, and to scale the cluster up and down by adding and removing master-ineligible nodes only. However, there are situations in which it may be desirable to add or remove some master-eligible nodes to or from a cluster.

Adding master-eligible nodes

If you wish to add some nodes to your cluster, simply configure the new nodes to find the existing cluster and start them up. Elasticsearch adds the new nodes to the voting configuration if it is appropriate to do so.

During master election or when joining an existing formed cluster, a node sends a join request to the master in order to be officially added to the cluster.

Removing master-eligible nodes

When removing master-eligible nodes, it is important not to remove too many all at the same time. For instance, if there are currently seven master-eligible nodes and you wish to reduce this to three, you cannot simply stop four of the nodes at once. Doing so would leave only three nodes remaining, which is less than half of the voting configuration, so the cluster could not take any further actions.

More precisely, if you shut down half or more of the master-eligible nodes all at the same time then the cluster will normally become unavailable. If this happens then you can bring the cluster back online by starting the removed nodes again.
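The arithmetic behind the seven-node example can be checked with a short sketch. It assumes the simple majority rule stated above: decisions need responses from more than half of the nodes in the voting configuration. The function name `has_quorum` is illustrative only.

```python
def has_quorum(voting_config_size: int, nodes_still_running: int) -> bool:
    """True if strictly more than half of the voting configuration is running."""
    return 2 * nodes_still_running > voting_config_size


print(has_quorum(7, 3))  # False: stopping four of seven at once loses the majority
print(has_quorum(7, 4))  # True: stopping three of seven keeps a majority
print(has_quorum(2, 1))  # False: with two voting nodes, both are required
```

This models a single instant in time. In practice the voting configuration shrinks as nodes are removed one at a time, which is why gradual removal keeps the cluster available.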

As long as there are at least three master-eligible nodes in the cluster, as a general rule it is best to remove nodes one-at-a-time, allowing enough time for the cluster to automatically adjust the voting configuration and adapt the fault tolerance level to the new set of nodes.

If there are only two master-eligible nodes remaining then neither node can be safely removed since both are required to reliably make progress. To remove one of these nodes you must first inform Elasticsearch that it should not be part of the voting configuration, and that the voting power should instead be given to the other node. You can then take the excluded node offline without preventing the other node from making progress. A node which is added to a voting configuration exclusion list still works normally, but Elasticsearch tries to remove it from the voting configuration so its vote is no longer required. Importantly, Elasticsearch will never automatically move a node on the voting exclusions list back into the voting configuration. Once an excluded node has been successfully auto-reconfigured out of the voting configuration, it is safe to shut it down without affecting the cluster’s master-level availability. A node can be added to the voting configuration exclusion list using the Voting configuration exclusions API. For example:

    # Add node to voting configuration exclusions list and wait for the system
    # to auto-reconfigure the node out of the voting configuration up to the
    # default timeout of 30 seconds
    POST /_cluster/voting_config_exclusions?node_names=node_name

    # Add node to voting configuration exclusions list and wait for
    # auto-reconfiguration up to one minute
    POST /_cluster/voting_config_exclusions?node_names=node_name&timeout=1m

The nodes that should be added to the exclusions list are specified by name using the ?node_names query parameter, or by their persistent node IDs using the ?node_ids query parameter. If a call to the voting configuration exclusions API fails, you can safely retry it. Only a successful response guarantees that the node has actually been removed from the voting configuration and will not be reinstated. If the elected master node is excluded from the voting configuration then it will abdicate to another master-eligible node that is still in the voting configuration if such a node is available.
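As a rough illustration of the two ways to identify nodes, the following sketch builds the request path for the POST call using only the Python standard library. It does not send anything. The helper name `voting_exclusion_path` and the node names are hypothetical, and the sketch assumes that multiple nodes are passed as a comma-separated list and that a request uses either node names or node IDs, not both.

```python
from urllib.parse import urlencode


def voting_exclusion_path(node_names=None, node_ids=None, timeout=None):
    """Build the path and query string for a voting exclusions POST request."""
    # Assumption: a request identifies nodes by name or by ID, never both.
    if bool(node_names) == bool(node_ids):
        raise ValueError("specify exactly one of node_names or node_ids")
    params = {}
    if node_names:
        params["node_names"] = ",".join(node_names)
    else:
        params["node_ids"] = ",".join(node_ids)
    if timeout:
        params["timeout"] = timeout  # for example "1m"; the default is 30 seconds
    # Keep commas unescaped so the list stays readable in the query string.
    return "/_cluster/voting_config_exclusions?" + urlencode(params, safe=",")


print(voting_exclusion_path(node_names=["node-1", "node-2"], timeout="1m"))
```

Because a failed call can be retried safely, a caller could wrap the actual HTTP request in a retry loop and treat only a successful response as confirmation that the nodes have left the voting configuration.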

Although the voting configuration exclusions API is most useful for down-scaling a two-node to a one-node cluster, it is also possible to use it to remove multiple master-eligible nodes all at the same time. Adding multiple nodes to the exclusions list causes the system to try to auto-reconfigure all of these nodes out of the voting configuration, allowing them to be safely shut down while keeping the cluster available. In the example described above, shrinking a seven-master-node cluster down to only three master nodes, you could add four nodes to the exclusions list, wait for confirmation, and then shut them down simultaneously.

Voting exclusions are only required when removing at least half of the master-eligible nodes from a cluster in a short time period. They are not required when removing master-ineligible nodes, nor are they required when removing fewer than half of the master-eligible nodes.
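The rule in the preceding paragraph reduces to a one-line check. This is a minimal sketch, assuming all removals happen within a short time period; `needs_voting_exclusions` is an illustrative name, not an Elasticsearch API.

```python
def needs_voting_exclusions(master_eligible_total: int,
                            master_eligible_removed: int) -> bool:
    """True when at least half of the master-eligible nodes are being removed."""
    if master_eligible_removed == 0:
        return False  # removing only master-ineligible nodes never needs exclusions
    return 2 * master_eligible_removed >= master_eligible_total


print(needs_voting_exclusions(7, 4))  # True: four of seven is at least half
print(needs_voting_exclusions(7, 3))  # False: fewer than half
print(needs_voting_exclusions(2, 1))  # True: one of two is exactly half
```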

Adding an exclusion for a node creates an entry for that node in the voting configuration exclusions list. This causes the system to automatically try to reconfigure the voting configuration to remove that node, and prevents the node from returning to the voting configuration once it has been removed. The current list of exclusions is stored in the cluster state and can be inspected as follows:

    response = client.cluster.state(
      filter_path: 'metadata.cluster_coordination.voting_config_exclusions'
    )
    puts response

GET /_cluster/state?filter_path=metadata.cluster_coordination.voting_config_exclusions

This list is limited in size by the cluster.max_voting_config_exclusions setting, which defaults to 10. See Discovery and cluster formation settings. Since voting configuration exclusions are persistent and limited in number, they must be cleaned up. Normally an exclusion is added when performing some maintenance on the cluster, and the exclusions should be cleaned up when the maintenance is complete. Clusters should have no voting configuration exclusions in normal operation.
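One way to act on this guidance is to check the filtered cluster state for leftover exclusions after maintenance. The sketch below parses a sample response rather than calling a cluster. The response shape (entries with `node_id` and `node_name` under the filtered path) and the sample values are assumptions for illustration, and `excluded_nodes` is a hypothetical helper.

```python
import json

MAX_EXCLUSIONS_DEFAULT = 10  # default of cluster.max_voting_config_exclusions


def excluded_nodes(cluster_state: dict) -> list:
    """Return the names of nodes listed in the voting configuration exclusions."""
    coordination = cluster_state.get("metadata", {}).get("cluster_coordination", {})
    exclusions = coordination.get("voting_config_exclusions", [])
    return [entry.get("node_name") for entry in exclusions]


# Hypothetical response body for the filtered cluster state request.
sample = json.loads("""
{"metadata": {"cluster_coordination": {"voting_config_exclusions": [
  {"node_id": "hypothetical-id-1", "node_name": "node-1"}
]}}}
""")

names = excluded_nodes(sample)
print(names)                                # names of the excluded nodes
print(len(names) < MAX_EXCLUSIONS_DEFAULT)  # whether there is room for more
```

An empty list is the expected result in normal operation; a non-empty list after maintenance suggests the exclusions still need to be cleared.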

If a node is excluded from the voting configuration because it is to be shut down permanently, its exclusion can be removed after it is shut down and removed from the cluster. If exclusions were created in error or were only required temporarily, they can also be cleared by specifying ?wait_for_removal=false.

    # Wait for all the nodes with voting configuration exclusions to be removed from
    # the cluster and then remove all the exclusions, allowing any node to return to
    # the voting configuration in the future.
    DELETE /_cluster/voting_config_exclusions

    # Immediately remove all the voting configuration exclusions, allowing any node
    # to return to the voting configuration in the future.
    DELETE /_cluster/voting_config_exclusions?wait_for_removal=false
