Cluster-level shard allocation and routing settings

Shard allocation is the process of assigning shard copies to nodes. This can happen during initial recovery, replica allocation, rebalancing, when nodes are added to or removed from the cluster, or when cluster or index settings that impact allocation are updated.

One of the main roles of the master is to decide which shards to allocate to which nodes, and when to move shards between nodes in order to rebalance the cluster.

There are a number of settings available to control the shard allocation process:

- Cluster-level shard allocation settings control allocation and rebalancing operations.

- Disk-based shard allocation settings explain how Elasticsearch takes available disk space into account, and the related settings.

- Shard allocation awareness and Forced awareness control how shards can be distributed across different racks or availability zones.

- Cluster-level shard allocation filtering allows certain nodes or groups of nodes to be excluded from allocation so that they can be decommissioned.

Besides these, there are a few other miscellaneous cluster-level settings.

Cluster-level shard allocation settings

You can use the following settings to control shard allocation and recovery:

- cluster.routing.allocation.enable

  (Dynamic) Enable or disable allocation for specific kinds of shards:

  - all - (default) Allows shard allocation for all kinds of shards.
  - primaries - Allows shard allocation only for primary shards.
  - new_primaries - Allows shard allocation only for primary shards for new indices.
  - none - No shard allocations of any kind are allowed for any indices.

  This setting does not affect the recovery of local primary shards when restarting a node. A restarted node that has a copy of an unassigned primary shard will recover that primary immediately, assuming that its allocation id matches one of the active allocation ids in the cluster state.

- cluster.routing.allocation.same_shard.host

  (Dynamic) If true, forbids multiple copies of a shard from being allocated to distinct nodes on the same host, i.e. which have the same network address. Defaults to false, meaning that copies of a shard may sometimes be allocated to nodes on the same host. This setting is only relevant if you run multiple nodes on each host.

- cluster.routing.allocation.node_concurrent_incoming_recoveries

  (Dynamic) How many concurrent incoming shard recoveries are allowed to happen on a node. Incoming recoveries are the recoveries where the target shard (most likely the replica unless a shard is relocating) is allocated on the node. Defaults to 2. Increasing this setting may cause shard movements to have a performance impact on other activity in your cluster, but may not make shard movements complete noticeably sooner. We do not recommend adjusting this setting from its default of 2.

- cluster.routing.allocation.node_concurrent_outgoing_recoveries

  (Dynamic) How many concurrent outgoing shard recoveries are allowed to happen on a node. Outgoing recoveries are the recoveries where the source shard (most likely the primary unless a shard is relocating) is allocated on the node. Defaults to 2. Increasing this setting may cause shard movements to have a performance impact on other activity in your cluster, but may not make shard movements complete noticeably sooner. We do not recommend adjusting this setting from its default of 2.

- cluster.routing.allocation.node_concurrent_recoveries

  (Dynamic) A shortcut to set both cluster.routing.allocation.node_concurrent_incoming_recoveries and cluster.routing.allocation.node_concurrent_outgoing_recoveries. The value of this setting takes effect only when the more specific setting is not configured. Defaults to 2. Increasing this setting may cause shard movements to have a performance impact on other activity in your cluster, but may not make shard movements complete noticeably sooner. We do not recommend adjusting this setting from its default of 2.

- cluster.routing.allocation.node_initial_primaries_recoveries

  (Dynamic) While the recovery of replicas happens over the network, the recovery of an unassigned primary after node restart uses data from the local disk. These should be fast so more initial primary recoveries can happen in parallel on each node. Defaults to 4. Increasing this setting may cause shard recoveries to have a performance impact on other activity in your cluster, but may not make shard recoveries complete noticeably sooner. We do not recommend adjusting this setting from its default of 4.
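The relationship between the node_concurrent_recoveries shortcut and the two more specific settings can be sketched in a few lines of Python. This is an illustration of the precedence rule described above, not Elasticsearch code. The function name and the plain dictionary of explicitly configured settings are assumptions made for the example.

```python
from typing import Dict, Mapping

# Default stated above for all three concurrent-recovery settings.
DEFAULT_CONCURRENT_RECOVERIES = 2

INCOMING = "cluster.routing.allocation.node_concurrent_incoming_recoveries"
OUTGOING = "cluster.routing.allocation.node_concurrent_outgoing_recoveries"
SHORTCUT = "cluster.routing.allocation.node_concurrent_recoveries"


def effective_recovery_limits(configured: Mapping[str, int]) -> Dict[str, int]:
    """Resolve per-node recovery limits from explicitly configured settings.

    Hypothetical helper: `configured` holds only the settings that were set
    explicitly. The shortcut is used for a direction only when the more
    specific setting for that direction is absent.
    """
    fallback = configured.get(SHORTCUT, DEFAULT_CONCURRENT_RECOVERIES)
    return {
        "incoming": configured.get(INCOMING, fallback),
        "outgoing": configured.get(OUTGOING, fallback),
    }


# The shortcut applies to outgoing recoveries, but the explicit incoming
# setting takes precedence over it.
limits = effective_recovery_limits({SHORTCUT: 3, INCOMING: 1})
print(limits)
```

With nothing configured, both limits resolve to the default of 2.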

Shard rebalancing settings

A cluster is balanced when it has an equal number of shards on each node, with all nodes needing equal resources, without having a concentration of shards from any index on any node. Elasticsearch runs an automatic process called rebalancing which moves shards between the nodes in your cluster to improve its balance. Rebalancing obeys all other shard allocation rules such as allocation filtering and forced awareness which may prevent it from completely balancing the cluster. In that case, rebalancing strives to achieve the most balanced cluster possible within the rules you have configured. If you are using data tiers then Elasticsearch automatically applies allocation filtering rules to place each shard within the appropriate tier. These rules mean that the balancer works independently within each tier.

You can use the following settings to control the rebalancing of shards across the cluster:

- cluster.routing.allocation.allow_rebalance

  (Dynamic) Specify when shard rebalancing is allowed:

  - always - Always allow rebalancing.
  - indices_primaries_active - Only when all primaries in the cluster are allocated.
  - indices_all_active - (default) Only when all shards (primaries and replicas) in the cluster are allocated.

- cluster.routing.rebalance.enable

  (Dynamic) Enable or disable rebalancing for specific kinds of shards:

  - all - (default) Allows shard balancing for all kinds of shards.
  - primaries - Allows shard balancing only for primary shards.
  - replicas - Allows shard balancing only for replica shards.
  - none - No shard balancing of any kind is allowed for any indices.

  Rebalancing is important to ensure the cluster returns to a healthy and fully resilient state after a disruption. If you adjust this setting, remember to set it back to all as soon as possible.

- cluster.routing.allocation.cluster_concurrent_rebalance

  (Dynamic) Defines the number of concurrent shard rebalances allowed across the whole cluster. Defaults to 2. Note that this setting only controls the number of concurrent shard relocations due to imbalances in the cluster. This setting does not limit shard relocations due to allocation filtering or forced awareness. Increasing this setting may cause the cluster to use additional resources moving shards between nodes, so we generally do not recommend adjusting this setting from its default of 2.

- cluster.routing.allocation.type

  Selects the algorithm used for computing the cluster balance. Defaults to desired_balance which selects the desired balance allocator. This allocator runs a background task which computes the desired balance of shards in the cluster. Once this background task completes, Elasticsearch moves shards to their desired locations.

  [8.8] Deprecated in 8.8. The balanced allocator type is deprecated and no longer recommended. May also be set to balanced to select the legacy balanced allocator. This allocator was the default allocator in versions of Elasticsearch before 8.6.0. It runs in the foreground, preventing the master from doing other work in parallel. It works by selecting a small number of shard movements which immediately improve the balance of the cluster, and when those shard movements complete it runs again and selects another few shards to move. Since this allocator makes its decisions based only on the current state of the cluster, it will sometimes move a shard several times while balancing the cluster.
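Because cluster.routing.rebalance.enable should be returned to all as soon as possible, it can help to prepare both the restricting request and the restoring request together. The following Python sketch only builds the JSON bodies for the cluster update settings API (PUT /_cluster/settings) and sends nothing. The helper name is an assumption for this example, and the choice of the persistent scope is illustrative.

```python
import json
from typing import Optional

REBALANCE_ENABLE = "cluster.routing.rebalance.enable"
ALLOWED_VALUES = {"all", "primaries", "replicas", "none"}


def rebalance_enable_body(value: Optional[str]) -> str:
    """Build a request body for PUT /_cluster/settings (hypothetical helper).

    Passing None yields a JSON null, which resets the setting so that it
    falls back to its default of all.
    """
    if value is not None and value not in ALLOWED_VALUES:
        raise ValueError(f"unsupported value: {value!r}")
    return json.dumps({"persistent": {REBALANCE_ENABLE: value}})


# Body that limits rebalancing to primary shards during maintenance.
restrict = rebalance_enable_body("primaries")
# Body that removes the override once maintenance is finished.
restore = rebalance_enable_body(None)

print(restrict)
print(restore)
```

Resetting with null rather than writing all explicitly leaves no stored override behind in the cluster settings.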

Shard balancing heuristics settings

Rebalancing works by computing a weight for each node based on its allocation of shards, and then moving shards between nodes to reduce the weight of the heavier nodes and increase the weight of the lighter ones. The cluster is balanced when there is no possible shard movement that can bring the weight of any node closer to the weight of any other node by more than a configurable threshold.

The weight of a node depends on the number of shards it holds and on the total estimated resource usage of those shards expressed in terms of the size of the shard on disk and the number of threads needed to support write traffic to the shard. Elasticsearch estimates the resource usage of shards belonging to data streams when they are created by a rollover. The estimated disk size of the new shard is the mean size of the other shards in the data stream. The estimated write load of the new shard is a weighted average of the actual write loads of recent shards in the data stream. Shards that do not belong to the write index of a data stream have an estimated write load of zero.
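The two estimates described above can be illustrated with a short Python sketch. The function names are hypothetical, and the weights used for the write load average are an assumption chosen for the example. This section does not say how Elasticsearch weights recent shards.

```python
from statistics import fmean
from typing import Sequence


def estimate_disk_size(other_shard_sizes: Sequence[float]) -> float:
    """Estimated size of a new shard: the mean size of the other shards."""
    return fmean(other_shard_sizes)


def estimate_write_load(recent_loads: Sequence[float],
                        weights: Sequence[float]) -> float:
    """Estimated write load: a weighted average of recent write loads.

    The weights are supplied by the caller and are purely illustrative.
    """
    if len(recent_loads) != len(weights):
        raise ValueError("need exactly one weight per write load")
    return sum(load * w for load, w in zip(recent_loads, weights)) / sum(weights)


# Three earlier shards of 10, 20 and 30 size units give a mean of 20.0.
size = estimate_disk_size([10, 20, 30])
# Write loads of 1.0, 2.0 and 4.0 threads, with newer shards weighted more
# heavily (assumed weights 1, 2, 4), give (1 + 4 + 16) / 7 = 3.0.
load = estimate_write_load([1.0, 2.0, 4.0], [1, 2, 4])
print(size, load)
```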

The following settings control how Elasticsearch combines these values into an overall measure of each node’s weight.

- cluster.routing.allocation.balance.threshold

  (float, Dynamic) The minimum improvement in weight which triggers a rebalancing shard movement. Defaults to 1.0f. Raising this value will cause Elasticsearch to stop rebalancing shards sooner, leaving the cluster in a more unbalanced state.

- cluster.routing.allocation.balance.shard

  (float, Dynamic) Defines the weight factor for the total number of shards allocated to each node. Defaults to 0.45f. Raising this value increases the tendency of Elasticsearch to equalize the total number of shards across nodes ahead of the other balancing variables.

- cluster.routing.allocation.balance.index

  (float, Dynamic) Defines the weight factor for the number of shards per index allocated to each node. Defaults to 0.55f. Raising this value increases the tendency of Elasticsearch to equalize the number of shards of each index across nodes ahead of the other balancing variables.

- cluster.routing.allocation.balance.disk_usage

  (float, Dynamic) Defines the weight factor for balancing shards according to their predicted disk size in bytes. Defaults to 2e-11f. Raising this value increases the tendency of Elasticsearch to equalize the total disk usage across nodes ahead of the other balancing variables.

- cluster.routing.allocation.balance.write_load

  (float, Dynamic) Defines the weight factor for the write load of each shard, in terms of the estimated number of indexing threads needed by the shard. Defaults to 10.0f. Raising this value increases the tendency of Elasticsearch to equalize the total write load across nodes ahead of the other balancing variables.
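To build intuition for how the four weight factors interact, the Python sketch below combines them in a deliberately simplified way: each factor scales a node's deviation from the cluster average for the corresponding quantity. This linear combination is an assumption made for illustration. It is not the exact formula Elasticsearch uses, which this section does not spell out. The class and function names are hypothetical, and the default factor values are the ones listed above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BalanceFactors:
    shard: float = 0.45        # cluster.routing.allocation.balance.shard
    index: float = 0.55        # cluster.routing.allocation.balance.index
    disk_usage: float = 2e-11  # cluster.routing.allocation.balance.disk_usage
    write_load: float = 10.0   # cluster.routing.allocation.balance.write_load


@dataclass(frozen=True)
class NodeLoad:
    shards: float        # total number of shards
    index_shards: float  # shards of the index under consideration
    disk_bytes: float    # predicted disk usage in bytes
    write_load: float    # estimated indexing threads needed


def node_weight(node: NodeLoad, average: NodeLoad,
                factors: BalanceFactors = BalanceFactors()) -> float:
    """Simplified weight: factor-scaled deviations from the cluster average."""
    return (
        factors.shard * (node.shards - average.shards)
        + factors.index * (node.index_shards - average.index_shards)
        + factors.disk_usage * (node.disk_bytes - average.disk_bytes)
        + factors.write_load * (node.write_load - average.write_load)
    )


average = NodeLoad(shards=100, index_shards=5, disk_bytes=5e11, write_load=1.0)
busy = NodeLoad(shards=110, index_shards=6, disk_bytes=6e11, write_load=1.5)

# A positive weight marks a node that carries more than the average load.
print(node_weight(busy, average))
```

In this example, half an indexing thread of extra write load contributes 5.0 to the weight, slightly more than ten extra shards (4.5) and more than 100 GB of extra disk usage (about 2.0). This shows why the small disk_usage factor is expressed per byte.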

-

If you have a large cluster, it may be unnecessary to keep it in

a perfectly balanced state at all times. It is less resource-intensive for the

cluster to operate in a somewhat unbalanced state rather than to perform all

the shard movements needed to achieve the perfect balance. If so, increase the

value of

cluster.routing.allocation.balance.thresholdto define the acceptable imbalance between nodes. For instance, if you have an average of 500 shards per node and can accept a difference of 5% (25 typical shards) between nodes, setcluster.routing.allocation.balance.thresholdto25. - We do not recommend adjusting the values of the heuristic weight factor settings. The default values work well in all reasonable clusters. Although different values may improve the current balance in some ways, it is possible that they will create unexpected problems in the future or prevent it from gracefully handling an unexpected disruption.

- Regardless of the result of the balancing algorithm, rebalancing might not be allowed due to allocation rules such as forced awareness and allocation filtering. Use the Cluster allocation explain API to explain the current allocation of shards.
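The threshold arithmetic in the first note can be sketched as follows. This is only an illustration: the helper name `balance_threshold_settings` and its parameters are hypothetical, and the returned dictionary merely mirrors the shape of a persistent cluster-settings body without contacting a cluster.

```python
def balance_threshold_settings(avg_shards_per_node: int, tolerated_percent: int) -> dict:
    """Build a settings body for an acceptable shard-count imbalance.

    Hypothetical helper: it performs only the arithmetic from the note
    above (a percentage of the average shard count per node).
    """
    if avg_shards_per_node <= 0 or not 0 <= tolerated_percent <= 100:
        raise ValueError("expected a positive shard count and a percentage in [0, 100]")
    threshold = avg_shards_per_node * tolerated_percent / 100
    return {"cluster.routing.allocation.balance.threshold": threshold}


# 500 shards per node on average, 5% tolerated difference.
settings = balance_threshold_settings(500, 5)
print(settings)
```

The resulting dictionary could be passed as the `persistent` argument of a cluster settings update, in the same way as the other examples on this page.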

Disk-based shard allocation settings

The disk-based shard allocator ensures that all nodes have enough disk space without performing more shard movements than necessary. It allocates shards based on a pair of thresholds known as the low watermark and the high watermark. Its primary goal is to ensure that no node exceeds the high watermark, or at least that any such overage is only temporary. If a node exceeds the high watermark then Elasticsearch will solve this by moving some of its shards onto other nodes in the cluster.

It is normal for nodes to temporarily exceed the high watermark from time to time.

The allocator also tries to keep nodes clear of the high watermark by forbidding the allocation of more shards to a node that exceeds the low watermark. Importantly, if all of your nodes have exceeded the low watermark then no new shards can be allocated and Elasticsearch will not be able to move any shards between nodes in order to keep the disk usage below the high watermark. You must ensure that your cluster has enough disk space in total and that there are always some nodes below the low watermark.

Shard movements triggered by the disk-based shard allocator must also satisfy all other shard allocation rules such as allocation filtering and forced awareness. If these rules are too strict then they can also prevent the shard movements needed to keep the nodes' disk usage under control. If you are using data tiers then Elasticsearch automatically configures allocation filtering rules to place shards within the appropriate tier, which means that the disk-based shard allocator works independently within each tier.

If a node is filling up its disk faster than Elasticsearch can move shards elsewhere then there is a risk that the disk will completely fill up. To prevent this, as a last resort, once the disk usage reaches the flood-stage watermark Elasticsearch will block writes to indices with a shard on the affected node. It will also continue to move shards onto the other nodes in the cluster. When disk usage on the affected node drops below the high watermark, Elasticsearch automatically removes the write block. Refer to Fix watermark errors to resolve persistent watermark errors.
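The three watermarks described so far can be pictured as escalating stages. The sketch below is a simplified model, assuming percentage watermarks with the documented defaults (85%, 90%, 95%) and ignoring the max headroom settings; the names `Watermarks` and `disk_stage` are hypothetical, and the exact behavior at a boundary value is an assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Watermarks:
    """Used-disk percentages; defaults mirror the documented defaults."""
    low: float = 85.0
    high: float = 90.0
    flood_stage: float = 95.0


def disk_stage(used_percent: float, wm: Watermarks = Watermarks()) -> str:
    """Return the most severe watermark that the given disk usage exceeds."""
    if used_percent > wm.flood_stage:
        return "flood_stage"  # writes are blocked on indices with a shard on this node
    if used_percent > wm.high:
        return "high"         # shards are moved away from this node
    if used_percent > wm.low:
        return "low"          # no further shards are allocated to this node
    return "ok"


for usage in (80, 87, 92, 97):
    print(usage, disk_stage(usage))
```

Note that the model is stateless: it does not capture that a write block applied at the flood stage is only released once usage falls back below the high watermark.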

It is normal for the nodes in your cluster to be using very different amounts of disk space. The balance of the cluster depends on a combination of factors which includes the number of shards on each node, the indices to which those shards belong, and the resource needs of each shard in terms of its size on disk and its CPU usage. Elasticsearch must trade off all of these factors against each other, and a cluster which is balanced when looking at the combination of all of these factors may not appear to be balanced if you focus attention on just one of them.

You can use the following settings to control disk-based allocation:

- cluster.routing.allocation.disk.threshold_enabled

  (Dynamic) Defaults to true. Set to false to disable the disk allocation decider. Upon disabling, it will also remove any existing index.blocks.read_only_allow_delete index blocks.

- cluster.routing.allocation.disk.watermark.low

  (Dynamic) Controls the low watermark for disk usage. It defaults to 85%, meaning that Elasticsearch will not allocate shards to nodes that have more than 85% disk used. It can alternatively be set to a ratio value, e.g., 0.85. It can also be set to an absolute byte value (like 500mb) to prevent Elasticsearch from allocating shards if less than the specified amount of space is available. This setting has no effect on the primary shards of newly-created indices but will prevent their replicas from being allocated.

- cluster.routing.allocation.disk.watermark.low.max_headroom

  (Dynamic) Controls the max headroom for the low watermark (in case of a percentage/ratio value). Defaults to 200GB when cluster.routing.allocation.disk.watermark.low is not explicitly set. This caps the amount of free space required.

- cluster.routing.allocation.disk.watermark.high

  (Dynamic) Controls the high watermark. It defaults to 90%, meaning that Elasticsearch will attempt to relocate shards away from a node whose disk usage is above 90%. It can alternatively be set to a ratio value, e.g., 0.9. It can also be set to an absolute byte value (similarly to the low watermark) to relocate shards away from a node if it has less than the specified amount of free space. This setting affects the allocation of all shards, whether previously allocated or not.

- cluster.routing.allocation.disk.watermark.high.max_headroom

  (Dynamic) Controls the max headroom for the high watermark (in case of a percentage/ratio value). Defaults to 150GB when cluster.routing.allocation.disk.watermark.high is not explicitly set. This caps the amount of free space required.

- cluster.routing.allocation.disk.watermark.enable_for_single_data_node

  (Static) In earlier releases, the default behavior was to disregard disk watermarks for a single data node cluster when making an allocation decision. This behavior has been deprecated since 7.14 and was removed in 8.0. The only valid value for this setting is now true. The setting will be removed in a future release.

- cluster.routing.allocation.disk.watermark.flood_stage

  (Dynamic) Controls the flood stage watermark, which defaults to 95%. Elasticsearch enforces a read-only index block (index.blocks.read_only_allow_delete) on every index that has one or more shards allocated on the node, and that has at least one disk exceeding the flood stage. This setting is a last resort to prevent nodes from running out of disk space. The index block is automatically released when the disk utilization falls below the high watermark. Similarly to the low and high watermark values, it can alternatively be set to a ratio value, e.g., 0.95, or an absolute byte value.

  An example of resetting the read-only index block on the my-index-000001 index:

      resp = client.indices.put_settings(
          index="my-index-000001",
          settings={
              "index.blocks.read_only_allow_delete": None
          },
      )
      print(resp)

      response = client.indices.put_settings(
        index: 'my-index-000001',
        body: {
          'index.blocks.read_only_allow_delete' => nil
        }
      )
      puts response

      PUT /my-index-000001/_settings
      {
        "index.blocks.read_only_allow_delete": null
      }

- cluster.routing.allocation.disk.watermark.flood_stage.max_headroom

  (Dynamic) Controls the max headroom for the flood stage watermark (in case of a percentage/ratio value). Defaults to 100GB when cluster.routing.allocation.disk.watermark.flood_stage is not explicitly set. This caps the amount of free space required.

  You cannot mix the usage of percentage/ratio values and byte values across the cluster.routing.allocation.disk.watermark.low, cluster.routing.allocation.disk.watermark.high, and cluster.routing.allocation.disk.watermark.flood_stage settings. Either all values are set to percentage/ratio values, or all are set to byte values. This enforcement is so that Elasticsearch can validate that the settings are internally consistent, ensuring that the low disk threshold is less than the high disk threshold, and the high disk threshold is less than the flood stage threshold. A similar comparison check is done for the max headroom values.

- cluster.routing.allocation.disk.watermark.flood_stage.frozen

  (Dynamic) Controls the flood stage watermark for dedicated frozen nodes, which defaults to 95%.

- cluster.routing.allocation.disk.watermark.flood_stage.frozen.max_headroom

  (Dynamic) Controls the max headroom for the flood stage watermark (in case of a percentage/ratio value) for dedicated frozen nodes. Defaults to 20GB when cluster.routing.allocation.disk.watermark.flood_stage.frozen is not explicitly set. This caps the amount of free space required on dedicated frozen nodes.

- cluster.info.update.interval

  (Dynamic) How often Elasticsearch should check on disk usage for each node in the cluster. Defaults to 30s.

Percentage values refer to used disk space, while byte values refer to free disk space. This can be confusing, since it flips the meaning of high and low. For example, it makes sense to set the low watermark to 10gb and the high watermark to 5gb, but not the other way around.
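The two rules above (no mixing of percentage/ratio and byte values, and an ordering that flips depending on the kind of value) can be sketched as a small consistency check. This is an illustrative model, not the validation code Elasticsearch runs: `parse_watermark` and `check_watermarks` are hypothetical names, byte units are assumed to be powers of 1024, and equal values are tolerated here because only inverted orderings are clearly ruled out by the text.

```python
import re
from typing import Tuple

_UNITS = {"b": 1, "kb": 1024, "mb": 1024**2, "gb": 1024**3, "tb": 1024**4, "pb": 1024**5}


def parse_watermark(value: str) -> Tuple[str, float]:
    """Return ("used", fraction of disk used) or ("free", free bytes)."""
    text = value.strip().lower()
    if text.endswith("%"):
        return "used", float(text[:-1]) / 100.0
    match = re.fullmatch(r"(\d+(?:\.\d+)?)(pb|tb|gb|mb|kb|b)", text)
    if match:
        return "free", float(match.group(1)) * _UNITS[match.group(2)]
    return "used", float(text)  # plain ratio such as "0.85"


def check_watermarks(low: str, high: str, flood_stage: str) -> None:
    """Raise ValueError if the three watermarks are inconsistent."""
    kinds, values = zip(*(parse_watermark(v) for v in (low, high, flood_stage)))
    if len(set(kinds)) != 1:
        raise ValueError("cannot mix percentage/ratio values with byte values")
    lo, hi, flood = values
    if kinds[0] == "used":
        ordered = lo <= hi <= flood   # used space grows towards the flood stage
    else:
        ordered = lo >= hi >= flood   # free space shrinks towards the flood stage
    if not ordered:
        raise ValueError("watermarks must escalate from low to high to flood stage")


check_watermarks("85%", "90%", "95%")        # percentages of used space
check_watermarks("100gb", "50gb", "10gb")    # bytes of free space
```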

An example of setting the low watermark to at least 100 gigabytes free, the high watermark to at least 50 gigabytes free, and the flood stage watermark to 10 gigabytes free, and of updating the information about the cluster every minute:

    resp = client.cluster.put_settings(
        persistent={
            "cluster.routing.allocation.disk.watermark.low": "100gb",
            "cluster.routing.allocation.disk.watermark.high": "50gb",
            "cluster.routing.allocation.disk.watermark.flood_stage": "10gb",
            "cluster.info.update.interval": "1m"
        },
    )
    print(resp)

    response = client.cluster.put_settings(
      body: {
        persistent: {
          'cluster.routing.allocation.disk.watermark.low' => '100gb',
          'cluster.routing.allocation.disk.watermark.high' => '50gb',
          'cluster.routing.allocation.disk.watermark.flood_stage' => '10gb',
          'cluster.info.update.interval' => '1m'
        }
      }
    )
    puts response

    PUT _cluster/settings
    {
      "persistent": {
        "cluster.routing.allocation.disk.watermark.low": "100gb",
        "cluster.routing.allocation.disk.watermark.high": "50gb",
        "cluster.routing.allocation.disk.watermark.flood_stage": "10gb",
        "cluster.info.update.interval": "1m"
      }
    }

Concerning the max headroom settings for the watermarks, please note that these apply only in the case that the watermark settings are percentages/ratios. The aim of a max headroom value is to cap the required free disk space before hitting the respective watermark. This is especially useful for servers with larger disks, where a percentage/ratio watermark could translate to a big free disk space requirement, and the max headroom can be used to cap the required free disk space amount. As an example, let us take the default settings for the flood watermark. It has a 95% default value, and the flood max headroom setting has a default value of 100GB. This means that:

- For a smaller disk, e.g., of 100GB, the flood watermark will hit at 95%, meaning at 5GB of free space, since 5GB is smaller than the 100GB max headroom value.

- For a larger disk, e.g., of 100TB, the flood watermark will hit at 100GB of free space. That is because the 95% flood watermark alone would require 5TB of free disk space, but that is capped by the max headroom setting to 100GB.

Finally, the max headroom settings have their default values only if their respective watermark settings are not explicitly set (thus, they have their default percentage values). If watermarks are explicitly set, then the max headroom settings do not have their default values, and would need to be explicitly set if they are desired.
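The capping behavior of the max headroom settings can be reproduced with a few lines of arithmetic. The sketch below is a minimal illustration; the function name `required_free_bytes` is hypothetical, sizes are assumed to use powers of 1024, and the two calls reuse the 100GB and 100TB disks from the example above.

```python
from typing import Optional

GB = 1024**3
TB = 1024**4


def required_free_bytes(total_bytes: int, used_percent: int,
                        max_headroom_bytes: Optional[int] = None) -> int:
    """Free space at which a percentage watermark is hit, capped by max headroom."""
    # Free space implied by the percentage watermark alone.
    free_from_percent = total_bytes * (100 - used_percent) // 100
    if max_headroom_bytes is None:
        return free_from_percent
    # The max headroom caps the required free space on large disks.
    return min(free_from_percent, max_headroom_bytes)


small = required_free_bytes(100 * GB, 95, 100 * GB)
large = required_free_bytes(100 * TB, 95, 100 * GB)
print(small // GB, "GB free on the 100GB disk")
print(large // GB, "GB free on the 100TB disk")
```

For the small disk the percentage governs (5GB), while for the large disk the 5TB implied by the percentage is capped at the 100GB headroom.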

Shard allocation awareness

You can use custom node attributes as awareness attributes to enable Elasticsearch to take your physical hardware configuration into account when allocating shards. If Elasticsearch knows which nodes are on the same physical server, in the same rack, or in the same zone, it can distribute the primary shard and its replica shards to minimize the risk of losing all shard copies in the event of a failure.

When shard allocation awareness is enabled with the dynamic cluster.routing.allocation.awareness.attributes setting, shards are only allocated to nodes that have values set for the specified awareness attributes. If you use multiple awareness attributes, Elasticsearch considers each attribute separately when allocating shards.

The number of attribute values determines how many shard copies are allocated in each location. If the number of nodes in each location is unbalanced and there are a lot of replicas, replica shards might be left unassigned.

Learn more about designing resilient clusters.

Enabling shard allocation awareness

To enable shard allocation awareness:

- Specify the location of each node with a custom node attribute. For example, if you want Elasticsearch to distribute shards across different racks, you might use an awareness attribute called rack_id.

  You can set custom attributes in two ways:

  - By editing the elasticsearch.yml config file:

        node.attr.rack_id: rack_one

  - Using the -E command line argument when you start a node:

        ./bin/elasticsearch -Enode.attr.rack_id=rack_one

- Tell Elasticsearch to take one or more awareness attributes into account when allocating shards by setting cluster.routing.allocation.awareness.attributes in every master-eligible node’s elasticsearch.yml config file.

  You can also use the cluster-update-settings API to set or update a cluster’s awareness attributes:

      resp = client.cluster.put_settings(
          persistent={
              "cluster.routing.allocation.awareness.attributes": "rack_id"
          },
      )
      print(resp)

      response = client.cluster.put_settings(
        body: {
          persistent: {
            'cluster.routing.allocation.awareness.attributes' => 'rack_id'
          }
        }
      )
      puts response

      const response = await client.cluster.putSettings({
        persistent: {
          "cluster.routing.allocation.awareness.attributes": "rack_id",
        },
      });
      console.log(response);

      PUT /_cluster/settings
      {
        "persistent" : {
          "cluster.routing.allocation.awareness.attributes" : "rack_id"
        }
      }

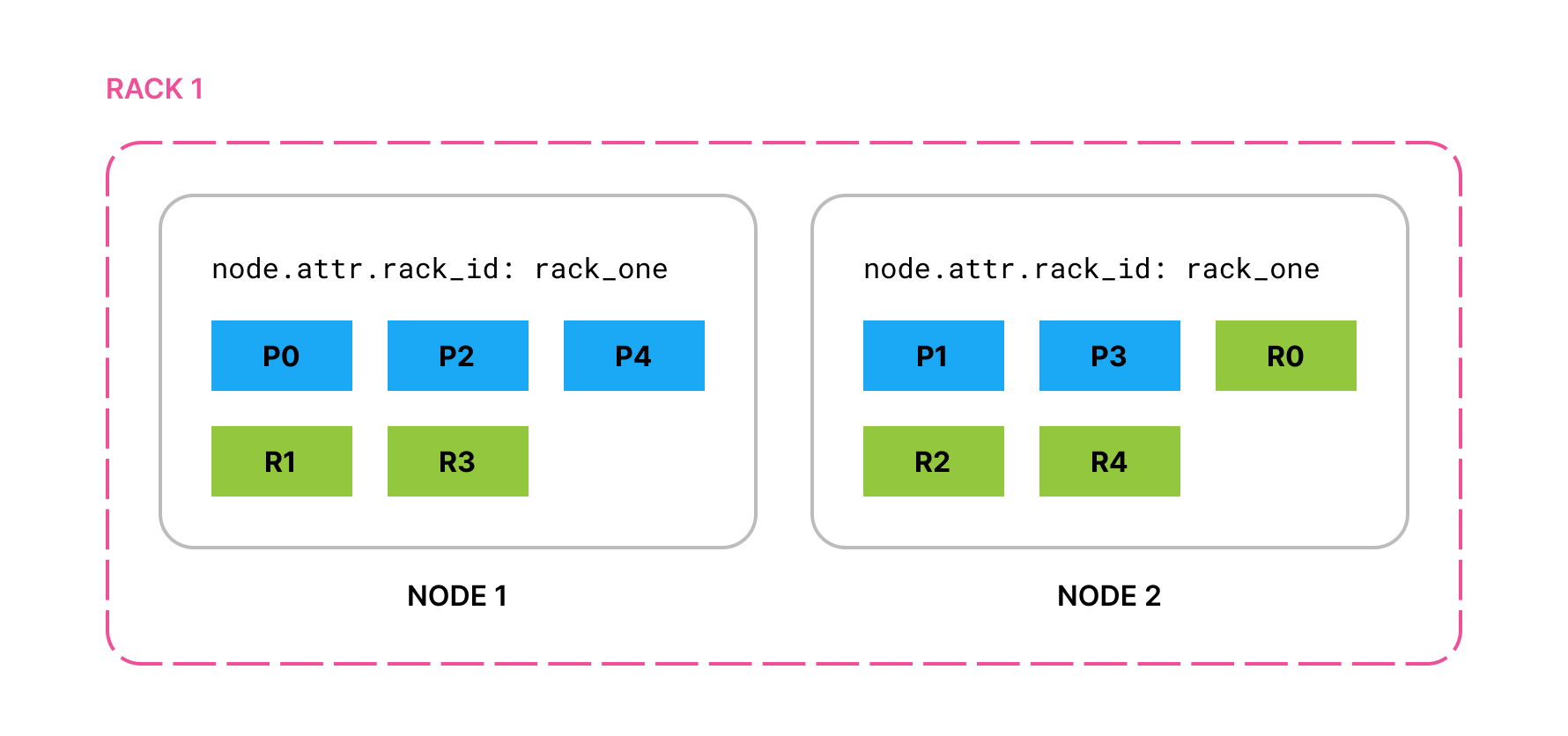

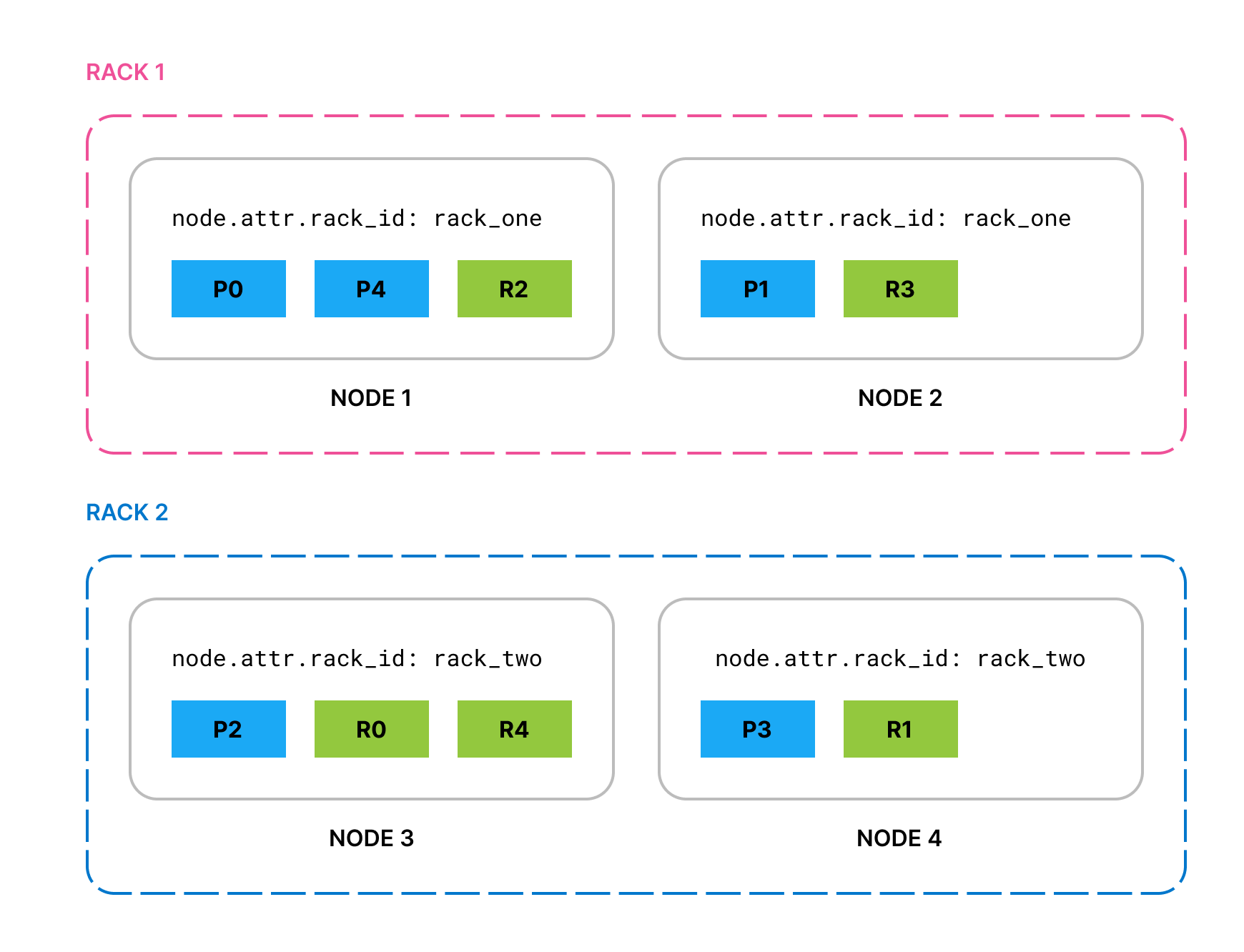

With this example configuration, if you start two nodes with node.attr.rack_id set to rack_one and create an index with 5 primary shards and 1 replica of each primary, all primaries and replicas are allocated across the two nodes.

If you add two nodes with node.attr.rack_id set to rack_two, Elasticsearch moves shards to the new nodes, ensuring (if possible) that no two copies of the same shard are in the same rack.

If rack_two fails and takes down both its nodes, by default Elasticsearch allocates the lost shard copies to nodes in rack_one. To prevent multiple copies of a particular shard from being allocated in the same location, you can enable forced awareness.

Forced awareness

By default, if one location fails, Elasticsearch spreads its shards across the remaining locations. This might be undesirable if the cluster does not have sufficient resources to host all its shards when one location is missing.

To prevent the remaining locations from being overloaded in the event of a whole-location failure, specify the attribute values that should exist with the cluster.routing.allocation.awareness.force.* settings. This will mean that Elasticsearch will prefer to leave some replicas unassigned in the event of a whole-location failure instead of overloading the nodes in the remaining locations.

For example, if you have an awareness attribute called zone and configure nodes in zone1 and zone2, you can use forced awareness to make Elasticsearch leave half of your shard copies unassigned if only one zone is available:

    cluster.routing.allocation.awareness.attributes: zone
    cluster.routing.allocation.awareness.force.zone.values: zone1,zone2

With this example configuration, if you have two nodes with node.attr.zone set to zone1 and an index with number_of_replicas set to 1, Elasticsearch allocates all the primary shards but none of the replicas. It will assign the replica shards once nodes with a different value for node.attr.zone join the cluster. In contrast, if you do not configure forced awareness, Elasticsearch will allocate all primaries and replicas to the two nodes even though they are in the same zone.
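One way to reason about this example is to assume that the copies of a shard are spread as evenly as possible over the known attribute values, so that each value may hold at most the number of copies divided by the number of values, rounded up. The sketch below models only that assumption. It is a simplified illustration with hypothetical function names, it assumes each location has enough nodes to hold its share, and it is not the allocator's actual implementation.

```python
import math
from typing import Iterable, Optional


def max_copies_per_value(copies: int, present_values: Iterable[str],
                         forced_values: Optional[Iterable[str]] = None) -> int:
    """Upper bound on copies of one shard per attribute value (assumed even spread)."""
    # Forced values count even when no node currently carries them.
    values = set(present_values) | set(forced_values or ())
    if not values:
        raise ValueError("at least one attribute value is required")
    return math.ceil(copies / len(values))


def assignable_copies(copies: int, present_values: Iterable[str],
                      forced_values: Optional[Iterable[str]] = None) -> int:
    """How many copies of one shard can be assigned to the locations that are present."""
    present = set(present_values)
    per_value = max_copies_per_value(copies, present, forced_values)
    return min(copies, per_value * len(present))


# One primary plus one replica (2 copies); only zone1 nodes are running.
print(assignable_copies(2, ["zone1"], forced_values=["zone1", "zone2"]))  # -> 1
print(assignable_copies(2, ["zone1"]))                                    # -> 2
```

With forced awareness only the primary is assigned while zone2 is missing. Without it, both copies land in zone1, matching the behavior described above.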

Cluster-level shard allocation filtering

You can use cluster-level shard allocation filters to control where Elasticsearch allocates shards from any index. These cluster-wide filters are applied in conjunction with per-index allocation filtering and allocation awareness.

Shard allocation filters can be based on custom node attributes or the built-in _name, _host_ip, _publish_ip, _ip, _host, _id and _tier attributes.

The cluster.routing.allocation settings are dynamic, enabling live indices to be moved from one set of nodes to another. Shards are only relocated if it is possible to do so without breaking another routing constraint, such as never allocating a primary and replica shard on the same node.

The most common use case for cluster-level shard allocation filtering is when you want to decommission a node. To move shards off of a node prior to shutting it down, you could create a filter that excludes the node by its IP address:

    resp = client.cluster.put_settings(
        persistent={
            "cluster.routing.allocation.exclude._ip": "10.0.0.1"
        },
    )
    print(resp)

    response = client.cluster.put_settings(
      body: {
        persistent: {
          'cluster.routing.allocation.exclude._ip' => '10.0.0.1'
        }
      }
    )
    puts response

    const response = await client.cluster.putSettings({
      persistent: {
        "cluster.routing.allocation.exclude._ip": "10.0.0.1",
      },
    });
    console.log(response);

    PUT _cluster/settings
    {
      "persistent" : {
        "cluster.routing.allocation.exclude._ip" : "10.0.0.1"
      }
    }

Cluster routing settings

- cluster.routing.allocation.include.{attribute}

  (Dynamic) Allocate shards to a node whose {attribute} has at least one of the comma-separated values.

- cluster.routing.allocation.require.{attribute}

  (Dynamic) Only allocate shards to a node whose {attribute} has all of the comma-separated values.

- cluster.routing.allocation.exclude.{attribute}

  (Dynamic) Do not allocate shards to a node whose {attribute} has any of the comma-separated values.

The cluster allocation settings support the following built-in attributes:

| Attribute | Description |
| --- | --- |
| _name | Match nodes by node name |
| _host_ip | Match nodes by host IP address (IP associated with hostname) |
| _publish_ip | Match nodes by publish IP address |
| _ip | Match either _host_ip or _publish_ip |
| _host | Match nodes by hostname |
| _id | Match nodes by node id |
| _tier | Match nodes by the node’s data tier role |

You can use wildcards when specifying attribute values, for example:

    resp = client.cluster.put_settings(
        persistent={
            "cluster.routing.allocation.exclude._ip": "192.168.2.*"
        },
    )
    print(resp)

    response = client.cluster.put_settings(
      body: {
        persistent: {
          'cluster.routing.allocation.exclude._ip' => '192.168.2.*'
        }
      }
    )
    puts response

    const response = await client.cluster.putSettings({
      persistent: {
        "cluster.routing.allocation.exclude._ip": "192.168.2.*",
      },
    });
    console.log(response);

    PUT _cluster/settings
    {
      "persistent": {
        "cluster.routing.allocation.exclude._ip": "192.168.2.*"
      }
    }
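To see how the include, require and exclude filters combine with wildcards, the following sketch evaluates them against a node described as a plain dictionary of attributes. It follows the wording of the setting descriptions above and is only an approximation: `node_allowed` is a hypothetical helper, Python's `fnmatchcase` stands in for the wildcard matching (it accepts more pattern syntax than a simple `*`), and the real matching logic in Elasticsearch may differ in details.

```python
from fnmatch import fnmatchcase
from typing import List, Mapping, Optional

Filters = Optional[Mapping[str, str]]


def _patterns(csv: str) -> List[str]:
    """Split a comma-separated setting value into individual patterns."""
    return [p.strip() for p in csv.split(",") if p.strip()]


def node_allowed(node: Mapping[str, str], include: Filters = None,
                 require: Filters = None, exclude: Filters = None) -> bool:
    """Decide whether shards may be allocated to the node (simplified model)."""
    def matches(attr: str, pattern: str) -> bool:
        return fnmatchcase(node.get(attr, ""), pattern)

    # exclude: any matching value rules the node out.
    for attr, csv in (exclude or {}).items():
        if any(matches(attr, p) for p in _patterns(csv)):
            return False
    # require: every listed value must match.
    for attr, csv in (require or {}).items():
        if not all(matches(attr, p) for p in _patterns(csv)):
            return False
    # include: if any include filter is set, at least one value must match.
    if include:
        return any(matches(attr, p)
                   for attr, csv in include.items() for p in _patterns(csv))
    return True


node = {"_ip": "192.168.2.17", "rack_id": "rack_one"}
print(node_allowed(node, exclude={"_ip": "192.168.2.*"}))
print(node_allowed(node, include={"rack_id": "rack_one,rack_two"}))
```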
